Why Fast Inference is Critical for Supply Chains

Global supply chains are often visualized as clean, static lines connecting point A to point B across a map. In reality, they behave more like chaotic, highly sensitive living systems. They are subjected to a relentless barrage of external variables: unexpected port closures, sudden geopolitical shifts, localized labor strikes, and increasingly severe weather events driven by climate change. When you are moving millions of tons of physical goods across oceans and continents, stability is an illusion. The only actual constant is disruption. How an enterprise handles that disruption dictates not only its economic survival but also its carbon footprint.

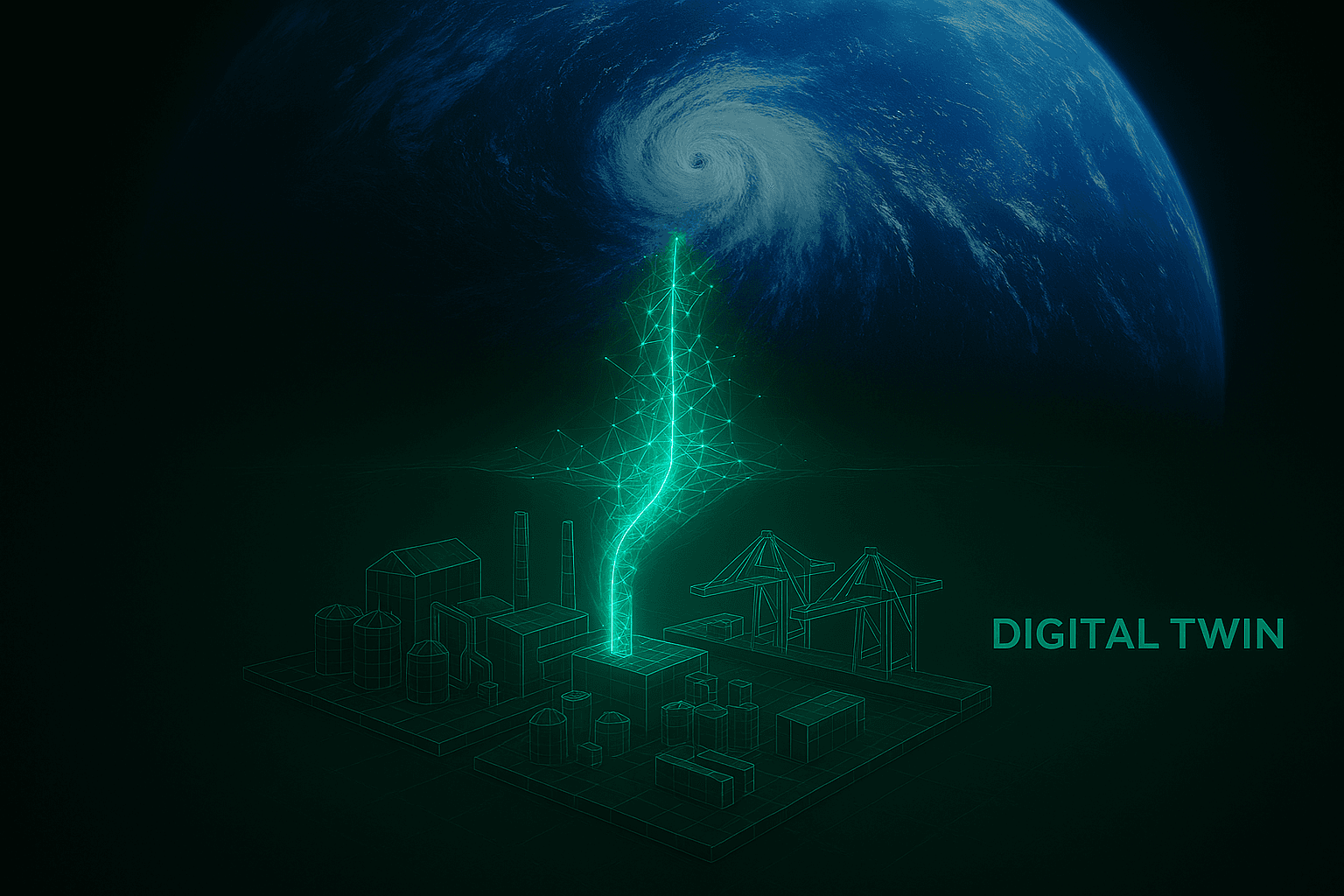

For decades, the logistics industry has relied on batch-processing algorithms to handle these routing disruptions. These legacy systems require massive datasets to be gathered, cleaned, and fed into CPU-bound servers that grind through complex variants of the traveling salesperson problem. The fatal flaw in this architecture is latency. In global logistics, a routing decision that takes four hours to compute often arrives too late to matter. By the time the algorithm outputs the optimal path around a developing hurricane in the Atlantic, the cargo ship may already have sailed dozens of miles in the wrong direction, burned thousands of gallons of excess bunker fuel, and missed its tight docking window at the destination port. In the physical world, compute latency translates directly into physical waste.
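
To put rough numbers on that latency argument, the sketch below computes how far a vessel keeps sailing, and how much fuel it burns, while it waits for a new route. The speed (18 knots) and fuel burn (150 t/day) are hypothetical illustration values, not data from any real ship. Not all of that fuel is excess, either: only the part spent on a heading the new route abandons is truly wasted.

```python
# Back-of-the-envelope cost of decision latency for a vessel that keeps sailing
# on its current heading while a new route is being computed.
# All vessel figures below are hypothetical illustration values, not measurements.

KM_PER_NAUTICAL_MILE = 1.852


def latency_cost(speed_knots: float, latency_hours: float, fuel_tonnes_per_day: float) -> tuple[float, float]:
    """Return (km sailed, tonnes of fuel burned) during the decision latency window."""
    distance_km = speed_knots * KM_PER_NAUTICAL_MILE * latency_hours
    fuel_tonnes = fuel_tonnes_per_day * latency_hours / 24.0
    return distance_km, fuel_tonnes


if __name__ == "__main__":
    # Compare a four-hour batch run with a one-second inference call.
    for label, latency_h in (("4 h batch run", 4.0), ("1 s inference", 1.0 / 3600.0)):
        km, fuel = latency_cost(speed_knots=18.0, latency_hours=latency_h, fuel_tonnes_per_day=150.0)
        print(f"{label}: {km:.2f} km sailed, {fuel:.4f} t fuel burned before the new route is known")
```

With these assumed figures, the four-hour run corresponds to roughly 133 km (about 72 nautical miles) and 25 t of fuel, while a one-second call corresponds to about 9 m and under 2 kg.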

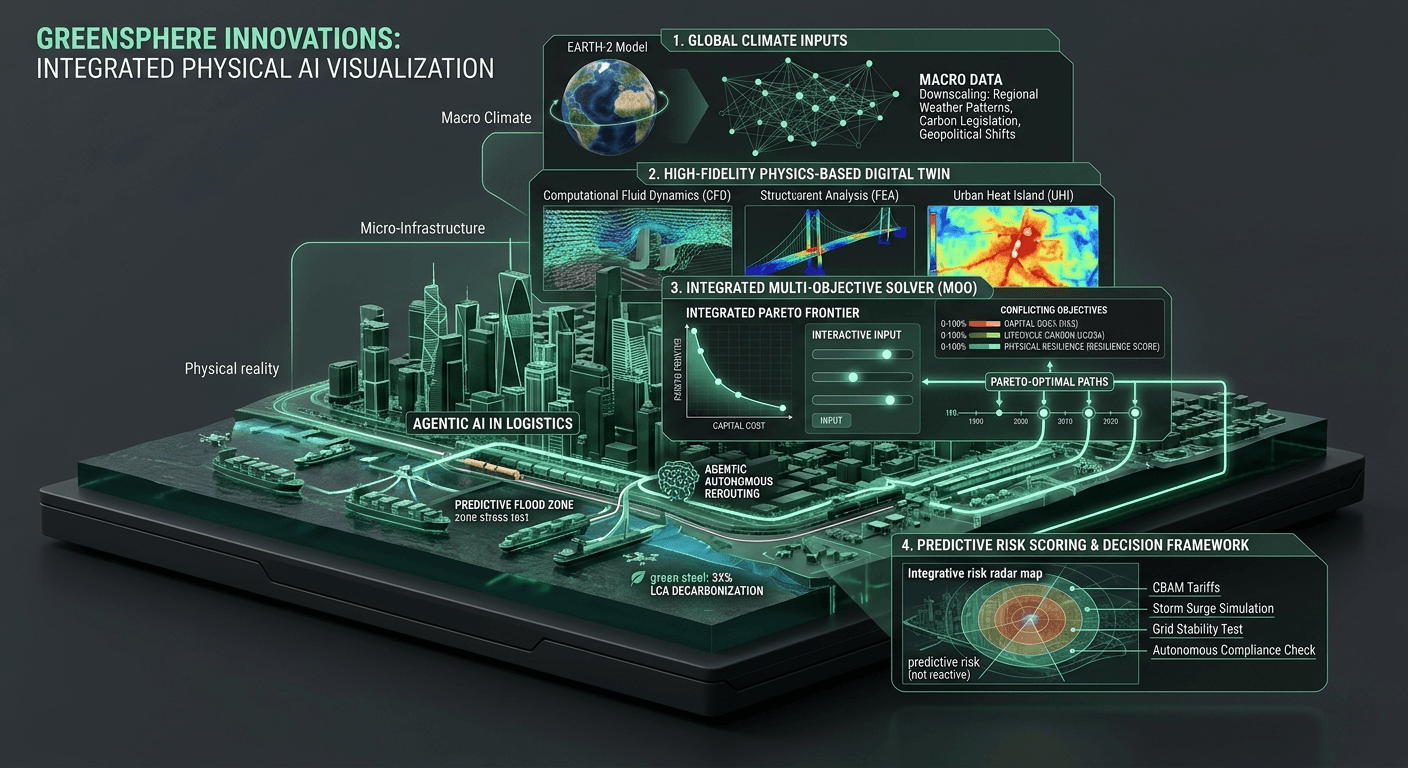

This brings us to the concept of inference, and why the speed at which it occurs is the defining metric for the future of sustainable logistics. In artificial intelligence, inference is the moment a trained model applies what it has learned to new, unseen data to make a prediction or a decision. If you have a digital twin of a global supply chain, inference is the exact millisecond the system decides whether to reroute a shipment through the Suez Canal or around the Cape of Good Hope based on a sudden weather alert. That decision point is exactly where the computational bottleneck in the built environment chokes. Traditional batch-oriented models simply cannot perform complex, multi-objective inference fast enough to be useful in a rapidly changing physical environment.
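
The definition of inference can be illustrated with a deliberately tiny example. Each route gets a toy linear model whose weights stand in for parameters learned during training; the weights, the storm and congestion inputs, and the function names are all hypothetical. Inference is only the final step: evaluate the frozen models on a fresh alert and pick the route with the shortest predicted transit. This is a single-objective sketch; a production system would presumably weigh carbon, cost, and port constraints as well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RouteDelayModel:
    """Toy linear model of transit time for one route.

    The weights stand in for parameters learned during training; the values
    below are hypothetical. Real routing models are far richer than this.
    """
    base_hours: float
    storm_weight: float       # extra hours per unit of storm severity on the route
    congestion_weight: float  # extra hours per unit of chokepoint congestion

    def predict_hours(self, storm_severity: float, congestion: float) -> float:
        return self.base_hours + self.storm_weight * storm_severity + self.congestion_weight * congestion


# Frozen "trained" parameters: inference never changes them, it only evaluates them.
MODELS = {
    "suez": RouteDelayModel(base_hours=480.0, storm_weight=40.0, congestion_weight=60.0),
    "cape_of_good_hope": RouteDelayModel(base_hours=720.0, storm_weight=10.0, congestion_weight=0.0),
}


def infer_route(storm_severity: float, congestion: float) -> str:
    """Inference step: score every route on the new alert and return the fastest predicted one."""
    return min(MODELS, key=lambda name: MODELS[name].predict_hours(storm_severity, congestion))


print(infer_route(storm_severity=0.0, congestion=0.0))  # -> suez
print(infer_route(storm_severity=5.0, congestion=3.0))  # -> cape_of_good_hope
```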

Breaking this bottleneck requires a fundamental shift from localized, CPU-heavy operations to massively parallel, GPU-accelerated computing. At GreenSphere Innovations, we treat high inference latency as an unacceptable operational risk. By deploying advanced GPU architectures and using frameworks like NVIDIA NIM microservices, we cut the inference latency for complex, global routing decisions from hours to under a second. That means ingesting real-time meteorological data, calculating the carbon intensity of thousands of potential alternative routes, evaluating constraints at destination ports, and outputting a Pareto-optimal decision before a ship's captain even finishes pouring a cup of coffee.
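
The Pareto-optimal selection step can be sketched in plain Python. This is a hypothetical illustration, not GreenSphere's pipeline or any NVIDIA NIM API. Each candidate route carries a predicted transit time and fuel burn, CO2 is derived with an assumed factor of 3.114 t CO2 per tonne of heavy fuel oil, and a sort-and-sweep pass keeps only routes that no other route beats on both time and emissions. The `choose` policy (fastest route inside a CO2 budget) is likewise an assumption; any rule that picks one point from the front would work.

```python
from typing import NamedTuple

# Assumed emission factor for heavy fuel oil, in tonnes of CO2 per tonne of fuel.
CO2_PER_TONNE_FUEL = 3.114


class Route(NamedTuple):
    name: str
    hours: float        # predicted transit time
    fuel_tonnes: float  # predicted fuel burn

    @property
    def co2_tonnes(self) -> float:
        return self.fuel_tonnes * CO2_PER_TONNE_FUEL


def pareto_front(routes: list[Route]) -> list[Route]:
    """Routes not dominated on (hours, CO2), fastest first.

    Sorting by (hours, CO2) means every route already seen is at least as fast,
    so a route is kept only if its CO2 is strictly below all of them.
    Exact ties keep a single representative.
    """
    front: list[Route] = []
    best_co2 = float("inf")
    for route in sorted(routes, key=lambda r: (r.hours, r.co2_tonnes)):
        if route.co2_tonnes < best_co2:
            front.append(route)
            best_co2 = route.co2_tonnes
    return front


def choose(front: list[Route], co2_budget_tonnes: float) -> Route:
    """Hypothetical policy: fastest Pareto-optimal route within the CO2 budget, else the lowest-emission one."""
    if not front:
        raise ValueError("no candidate routes")
    within_budget = [r for r in front if r.co2_tonnes <= co2_budget_tonnes]
    return within_budget[0] if within_budget else front[-1]


candidates = [
    Route("direct", 500.0, 1000.0),
    Route("northern_detour", 520.0, 900.0),
    Route("coastal", 530.0, 950.0),  # slower and dirtier than northern_detour: dominated
    Route("long_route", 700.0, 800.0),
]
front = pareto_front(candidates)
print([r.name for r in front])                       # -> ['direct', 'northern_detour', 'long_route']
print(choose(front, co2_budget_tonnes=3000.0).name)  # -> northern_detour
```

Sorting makes the filter O(n log n), so it stays cheap even for thousands of candidates; in practice the expensive part is producing the predicted times and fuel burns in the first place, which is where GPU-accelerated inference would matter.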

It is crucial to understand that fast inference is not merely a tool for protecting profit margins; it is a fundamental requirement for decarbonization. Inefficient routing is a major, largely hidden source of avoidable emissions. Container ships idling outside congested ports, airplanes circling in holding patterns, and heavy freight trucks taking longer terrestrial routes because of outdated traffic data all release greenhouse gases that never needed to be emitted. When we optimize inference speed, we enable micro-corrections. Instead of making massive, costly course corrections after a problem has fully materialized, a low-latency system can make dozens of small, efficient adjustments to a route in real time, keeping emissions aligned with ESG targets.
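
One concrete form of micro-correction is just-in-time arrival: instead of racing to port and idling at anchor, the vessel re-solves its speed every time a new berth-window estimate arrives. The sketch below assumes a hypothetical operating range of 10 to 22 knots and hourly updates; the function names are illustrative. Because hourly fuel burn is commonly approximated as rising with roughly the cube of speed, slowing down when a window slips saves fuel that would otherwise be burned only to wait.

```python
def required_speed(remaining_nm: float, hours_to_window: float,
                   min_knots: float = 10.0, max_knots: float = 22.0) -> float:
    """Speed that reaches the berth just as its window opens, clamped to an assumed operating range."""
    if hours_to_window <= 0:
        return max_knots  # window already open: go as fast as allowed
    return max(min_knots, min(max_knots, remaining_nm / hours_to_window))


def replan_speeds(remaining_nm: float, window_estimates_h: list[float], tick_hours: float = 1.0) -> list[float]:
    """Re-solve the speed at every data update instead of once per voyage.

    window_estimates_h[i] is the latest estimate, at tick i, of hours until the
    berth window opens. Returns the speed chosen at each tick.
    """
    speeds = []
    for hours_to_window in window_estimates_h:
        speed = required_speed(remaining_nm, hours_to_window)
        speeds.append(speed)
        # Advance the vessel by one tick at the chosen speed.
        remaining_nm = max(0.0, remaining_nm - speed * tick_hours)
    return speeds


# At the third update the berth window slips by six hours (8 h expected, 14 h reported).
print(replan_speeds(200.0, [10.0, 9.0, 14.0]))
```

In the example, the first two updates hold 20 knots; once the window slips, the ship drops to about 11.4 knots rather than arriving early and idling outside the port.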

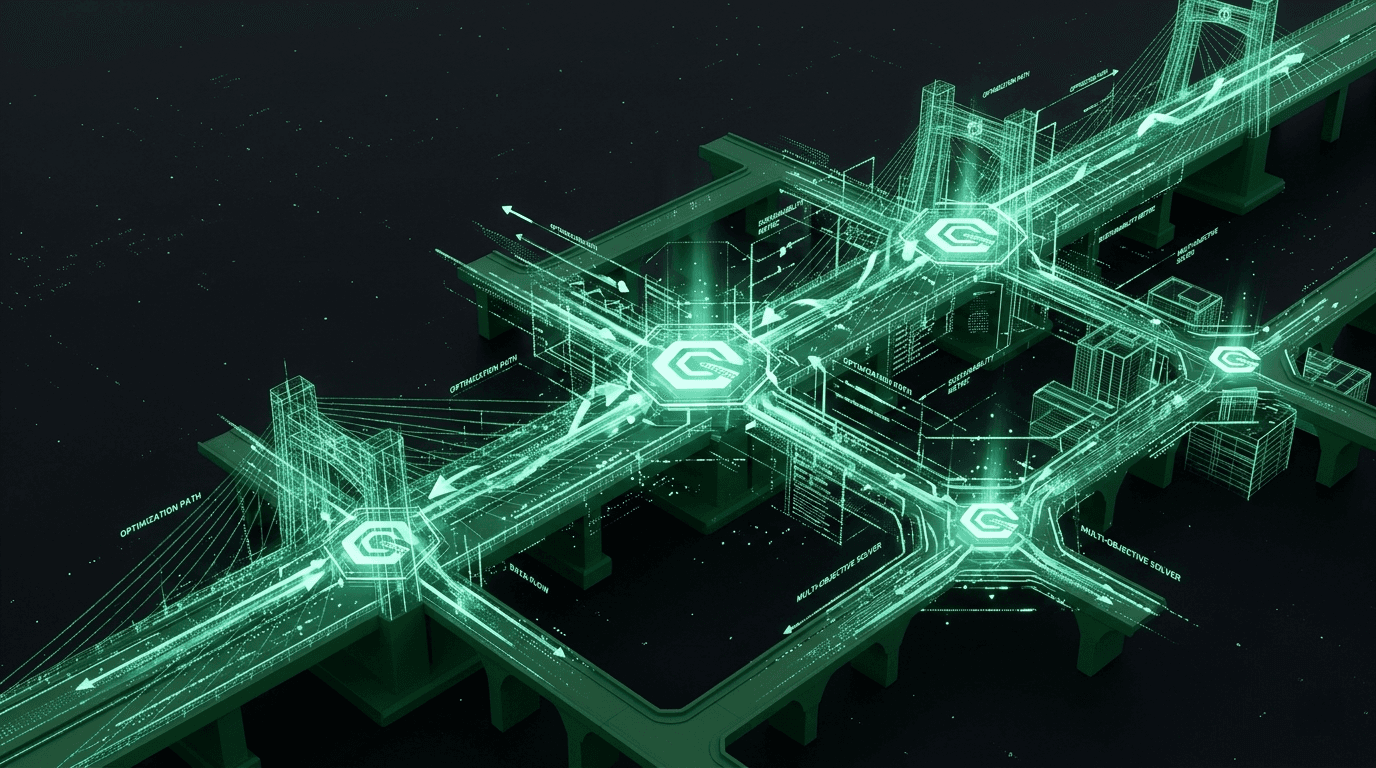

But fast inference alone is only half of the equation. Having the answer instantly does not help if it takes a human operator three hours to approve and implement the change. This is why GreenSphere is integrating Agentic AI into our digital twin environments. An agentic system doesn't just passively monitor data and flash a warning light on a dashboard. It acts as an autonomous logistical engineer. Armed with sub-second inference capabilities, our agentic workflows can instantly negotiate new transit windows, update fuel consumption projections, and actively reroute the physical asset without requiring human intervention. It transforms a reactive supply chain into a living, proactive network.
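
Reduced to a skeleton, the agentic loop described here is: take the inference output, translate it into tool calls, and execute them without a manual approval step. Everything in this sketch is hypothetical, including the tool names, the alert format, and the `VoyagePlan` type; it is not GreenSphere's agent framework or any particular agent library. The allow-list and audit log are one possible set of guardrails for taking the human out of the approval path, so that every autonomous action stays constrained and reviewable after the fact.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class VoyagePlan:
    route: str
    berth_window_h: float
    fuel_projection_t: float
    audit_log: list[str] = field(default_factory=list)


# Hypothetical tools. In a real deployment each would call a port, bunkering or
# fleet-management system; here they only update the plan and record the action.
def reroute(plan: VoyagePlan, route: str) -> None:
    plan.route = route
    plan.audit_log.append(f"reroute -> {route}")


def update_fuel_projection(plan: VoyagePlan, tonnes: float) -> None:
    plan.fuel_projection_t = tonnes
    plan.audit_log.append(f"fuel projection -> {tonnes} t")


def negotiate_transit_window(plan: VoyagePlan, hours: float) -> None:
    plan.berth_window_h = hours
    plan.audit_log.append(f"berth window -> {hours} h")


# Allow-list: the agent may only invoke tools registered here.
TOOLS: dict[str, Callable[..., None]] = {
    "reroute": reroute,
    "update_fuel_projection": update_fuel_projection,
    "negotiate_transit_window": negotiate_transit_window,
}


def decide(alert: dict) -> list[tuple[str, object]]:
    """Stand-in for the inference step: map an alert to an ordered list of tool calls."""
    if not alert.get("storm_on_route"):
        return []
    return [
        ("reroute", alert["alternative_route"]),
        ("update_fuel_projection", alert["alternative_fuel_t"]),
        ("negotiate_transit_window", alert["alternative_eta_h"]),
    ]


def act(plan: VoyagePlan, actions: list[tuple[str, object]]) -> VoyagePlan:
    """Execute the proposed actions in order, with no manual approval step."""
    for name, arg in actions:
        if name not in TOOLS:
            raise ValueError(f"tool not allowed: {name}")
        TOOLS[name](plan, arg)
    return plan


plan = VoyagePlan(route="suez", berth_window_h=480.0, fuel_projection_t=1000.0)
alert = {"storm_on_route": True, "alternative_route": "cape_of_good_hope",
         "alternative_fuel_t": 1350.0, "alternative_eta_h": 720.0}
act(plan, decide(alert))
print(plan.audit_log)
```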

We are building the engine to run the future of global trade. We cannot expect to build sustainable, resilient supply chains if our digital infrastructure is slower than the physical weather patterns disrupting it. By prioritizing GPU-accelerated, sub-second inference, we are giving enterprise logistics teams the one resource they have never had: the ability to outpace chaos. It is time to stop reacting to the world and start optimizing it in real time.