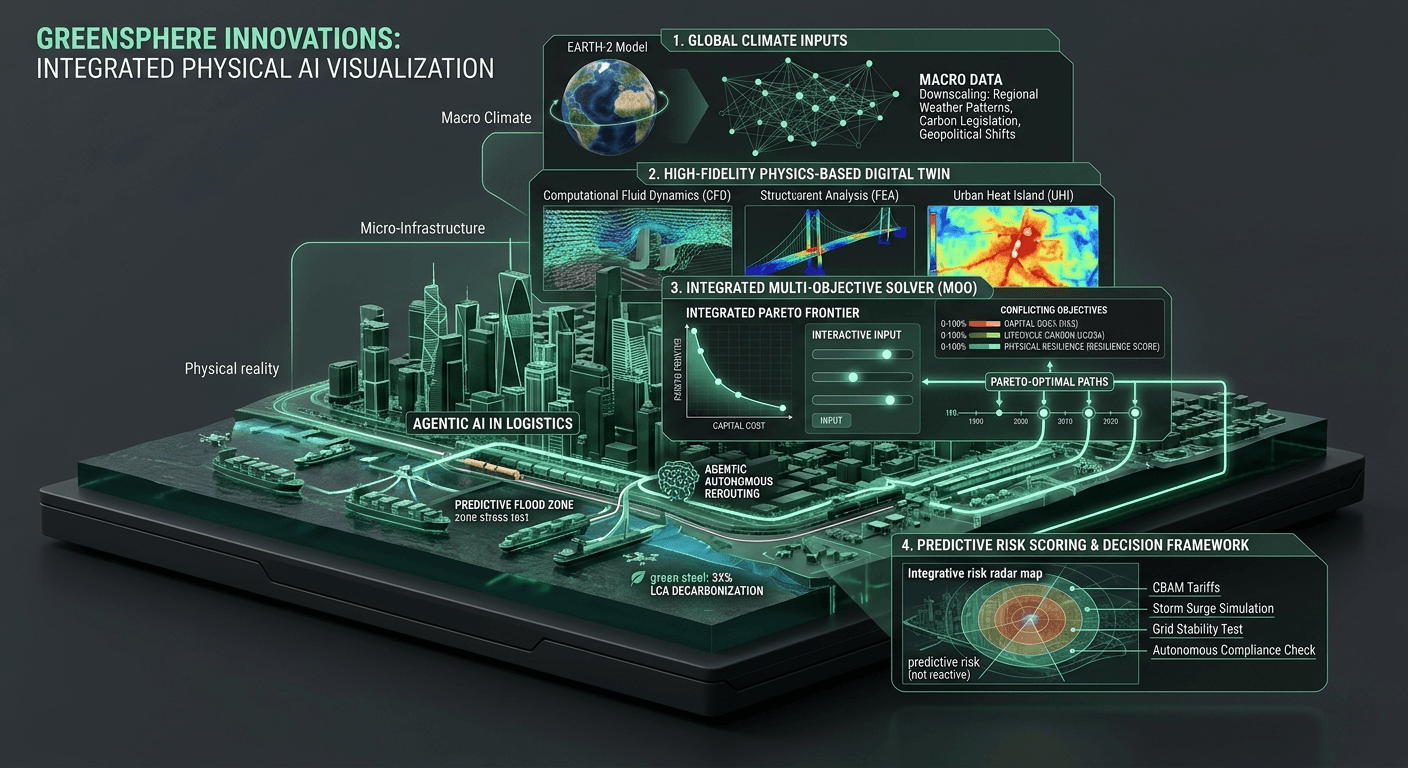

Macro to Micro: Connecting Global Climate Models to Local Infrastructure

The climate crisis is a global phenomenon, but its destruction is intensely local. When we talk about rising sea levels or atmospheric rivers, we are describing planetary-scale events. But when a coastal seawall collapses or a critical supply chain node is paralyzed, the failure happens on a scale of millimeters. The great engineering challenge of the next decade is not just predicting the weather; it is translating planetary-scale climate data into millimeter-scale structural mechanics.

The Macro View: The Promise of Earth-2

Recently, the technology sector took a massive leap forward in understanding the macro-environment. In early 2026, NVIDIA expanded its Earth-2 family of open models, offering an unprecedented, GPU-accelerated software stack for AI weather prediction. As Jensen Huang, the founder and CEO of NVIDIA, aptly stated during the rollout of the Earth-2 initiative, "Climate disasters are now normal... Earth-2 cloud APIs strive to help us better prepare for — and inspire us to act to moderate — extreme weather."

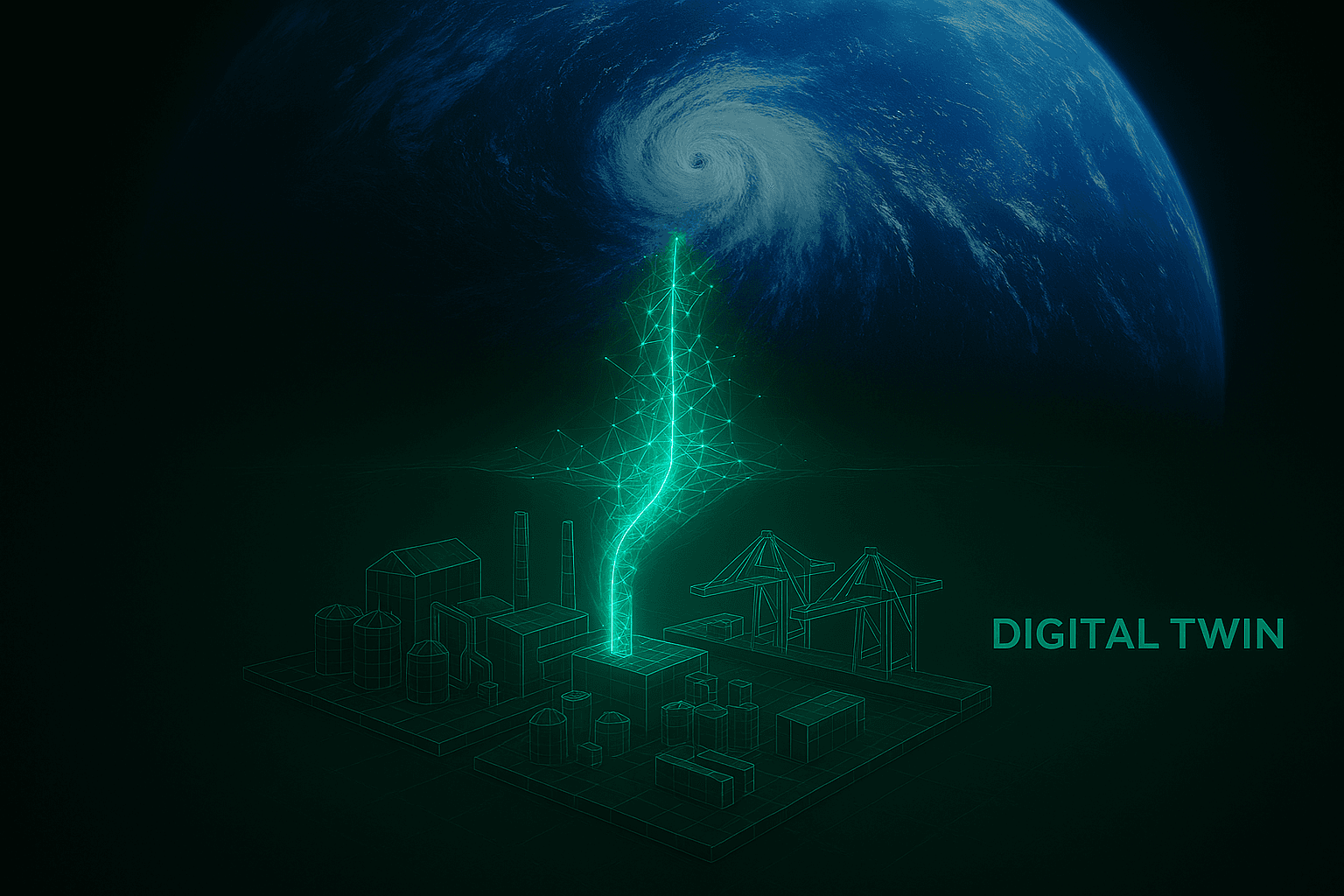

Earth-2 is essentially a digital twin of our planet. By leveraging state-of-the-art generative AI and diffusion modeling, it can predict extreme weather events at kilometer-scale resolution, running thousands of times faster than traditional CPU-based numerical models. It gives humanity a high-fidelity radar for the future.

However, for civil engineers and supply chain architects, a global weather prediction is only half the battle. Knowing that a Category 5 hurricane is going to strike the Gulf Coast is vital, but that macro-level data does not automatically tell you whether a specific steel girder on a logistics hub in Texas will fail under the resulting wind loads.
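To make the gap concrete, here is a minimal sketch of the first translation step: turning a forecast wind speed into a force on a single member. It assumes the textbook dynamic-pressure relation (F = 0.5 · ρ · v² · Cd · A) and standard sea-level air density. The drag coefficient and exposed area are hypothetical values chosen for illustration, not properties of any real girder, and a real design check would follow the applicable wind-loading code rather than this shortcut.

```python
AIR_DENSITY_KG_M3 = 1.225  # standard sea-level air density (assumed)

def wind_force_newtons(wind_speed_ms: float, drag_coefficient: float, area_m2: float) -> float:
    """Drag force on a member: F = 0.5 * rho * v^2 * Cd * A."""
    dynamic_pressure_pa = 0.5 * AIR_DENSITY_KG_M3 * wind_speed_ms ** 2
    return dynamic_pressure_pa * drag_coefficient * area_m2

# Category 5 begins at sustained winds of about 70 m/s (157 mph).
# Cd = 2.0 and A = 3.0 m^2 are hypothetical values for an exposed girder face.
force = wind_force_newtons(wind_speed_ms=70.0, drag_coefficient=2.0, area_m2=3.0)
print(f"Wind force on member: {force:.1f} N")
```

Because force grows with the square of wind speed, a modest error in the local wind estimate becomes a much larger error in the load, which is why the regional number alone is not enough.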

The Downscaling Bottleneck

This disconnect represents a massive computational bottleneck in the built environment. Global climate models operate in the realm of atmospheric physics and massive meteorological grids. Heavy infrastructure, on the other hand, operates in the realm of statics, thermodynamics, and finite element analysis (FEA).
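A simple way to picture the mismatch: a gridded model reports values at cell corners, while a structure sits at one point inside a cell. The sketch below uses plain bilinear interpolation as the simplest possible stand-in for downscaling. Real downscaling accounts for terrain, surface roughness, and local physics, so treat this only as an illustration of the grid-to-site step. The function name, coordinates, and wind values are all hypothetical.

```python
def bilinear(x, y, x0, x1, y0, y1, v00, v10, v01, v11):
    """Interpolate a gridded value at (x, y) inside the cell [x0, x1] x [y0, y1].

    v00 is the value at (x0, y0), v10 at (x1, y0),
    v01 at (x0, y1), and v11 at (x1, y1).
    """
    tx = (x - x0) / (x1 - x0)  # fractional position along x
    ty = (y - y0) / (y1 - y0)  # fractional position along y
    bottom = v00 + tx * (v10 - v00)  # interpolate along the y0 edge
    top = v01 + tx * (v11 - v01)     # interpolate along the y1 edge
    return bottom + ty * (top - bottom)

# Hypothetical 2 km x 1 km grid cell with corner wind speeds in m/s;
# the site sits 0.5 km east and 0.25 km north of the south-west corner.
site_wind_ms = bilinear(0.5, 0.25, 0.0, 2.0, 0.0, 1.0, 50.0, 58.0, 54.0, 62.0)
print(f"Interpolated wind at site: {site_wind_ms:.1f} m/s")
```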

Historically, these two distinct worlds did not communicate efficiently. A city planner or logistics manager would look at a generic, regional climate forecast and attempt to manually apply a standardized "Factor of Safety" to a new building's design or a routing schedule. This broad-brush, disconnected approach is exactly why we end up with bloated, over-engineered infrastructure that burns through unnecessary embodied carbon, or dangerously under-engineered structures that fail catastrophically during unprecedented, hyper-localized weather anomalies.
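The over- and under-engineering problem can be shown with a few lines of arithmetic. This sketch assumes wind load scales with the square of wind speed and applies one blanket Factor of Safety to a regional design wind. The wind speeds, the factor, and the function name are hypothetical, chosen only to show how the same design rule lands very differently at two sites.

```python
def capacity_margin(design_wind_ms, factor_of_safety, site_peak_wind_ms):
    """Ratio of designed capacity to site demand, taking wind load as proportional to v^2.

    A margin above 1.0 means spare capacity; below 1.0 means demand exceeds capacity.
    """
    design_load = factor_of_safety * design_wind_ms ** 2
    site_demand = site_peak_wind_ms ** 2
    return design_load / site_demand

REGIONAL_DESIGN_WIND_MS = 40.0  # hypothetical regional forecast value
FACTOR_OF_SAFETY = 2.0          # hypothetical blanket factor

sheltered = capacity_margin(REGIONAL_DESIGN_WIND_MS, FACTOR_OF_SAFETY, 30.0)
exposed = capacity_margin(REGIONAL_DESIGN_WIND_MS, FACTOR_OF_SAFETY, 65.0)

print(f"Sheltered site margin: {sheltered:.2f}")  # well above 1: excess material
print(f"Exposed site margin:   {exposed:.2f}")    # below 1: capacity exceeded
```

Under these assumed numbers, the sheltered site carries more than three times the capacity it needs, while the exposed site falls short despite the same safety factor.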

We cannot build resilient, sustainable infrastructure by simply guessing how a macro-climate event will impact a micro-structural node. We need a system that seamlessly ingests planetary data and instantly converts it into actionable structural mathematics.

Agentic Engineering and the Local Digital Twin

At GreenSphere Innovations, our focus is squarely on this integration layer. My professional background is not in programming the foundational, low-level machine learning algorithms that predict the global weather; it is in systems architecture and civil engineering. Our mission is to build the environment where those massive, macro-level AI predictions can actively interact with the heavy, physical realities of the built environment.

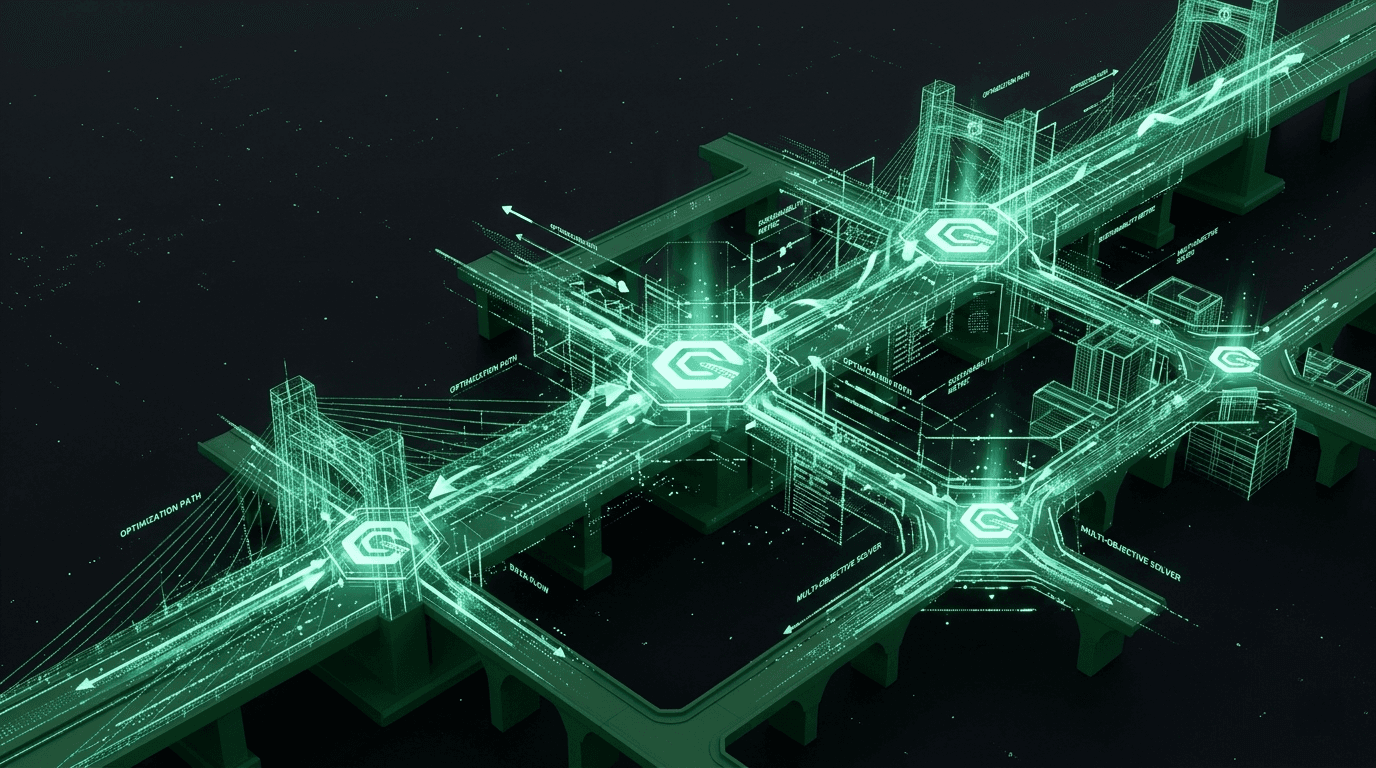

We utilize agentic engineering to bridge this gap. Within the GreenSphere platform, we build hyper-localized, physics-based digital twins of specific enterprise infrastructure assets—whether it is a transit hub in New York or a manufacturing facility navigating supply chain delays. When massive, AI-driven climate models generate an extreme weather prediction, our agentic workflows autonomously ingest that data.

The agents don't just alert a human operator with a red dashboard light; they translate the atmospheric data into localized, physical force vectors and logistical constraints. Powered by our native GPU Inference Core, they run tens of thousands of stress tests against the localized digital twin in real time. The software simultaneously calculates the physical load on the structure, the cascading supply chain disruptions, and the lifecycle carbon impact of reinforcing the vulnerabilities. We take a global weather anomaly and autonomously turn it into a Pareto-optimal engineering schematic in milliseconds.
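For readers who want a feel for what "thousands of stress tests" and "Pareto-optimal" mean in practice, here is a toy sketch. It is not the GreenSphere implementation. It assumes gust speeds drawn from a normal distribution as a stand-in for a forecast ensemble, a one-line drag-load model in place of a finite element solve, and four hypothetical reinforcement options with made-up capacities and embodied-carbon figures.

```python
import random

AIR_DENSITY_KG_M3 = 1.225  # standard sea-level air density (assumed)

def failure_probability(capacity_n, gust_samples_ms, drag_area_m2):
    """Fraction of sampled gusts whose drag load exceeds the member capacity."""
    failures = sum(
        1 for v in gust_samples_ms
        if 0.5 * AIR_DENSITY_KG_M3 * v * v * drag_area_m2 > capacity_n
    )
    return failures / len(gust_samples_ms)

def pareto_front(designs):
    """Keep designs not dominated on (failure_prob, carbon_kg); lower is better on both."""
    front = []
    for d in designs:
        dominated = any(
            o["failure_prob"] <= d["failure_prob"]
            and o["carbon_kg"] <= d["carbon_kg"]
            and (o["failure_prob"] < d["failure_prob"] or o["carbon_kg"] < d["carbon_kg"])
            for o in designs
        )
        if not dominated:
            front.append(d)
    return front

# Stand-in for a forecast ensemble: 10,000 gust samples (m/s), fixed seed for repeatability.
rng = random.Random(42)
gusts = [rng.gauss(60.0, 8.0) for _ in range(10_000)]

# Hypothetical reinforcement options; capacities and carbon costs are illustrative only.
candidates = [
    {"name": "as_built", "capacity_n": 12_000.0, "carbon_kg": 0.0},
    {"name": "brace", "capacity_n": 18_000.0, "carbon_kg": 400.0},
    {"name": "brace_heavy", "capacity_n": 18_000.0, "carbon_kg": 900.0},
    {"name": "replace", "capacity_n": 30_000.0, "carbon_kg": 2500.0},
]
for c in candidates:
    c["failure_prob"] = failure_probability(c["capacity_n"], gusts, drag_area_m2=6.0)

front = pareto_front(candidates)
for d in front:
    print(f'{d["name"]}: P(fail)={d["failure_prob"]:.4f}, carbon={d["carbon_kg"]:.0f} kg')
```

In this toy setup, the heavier brace drops out because it costs more carbon for the same capacity as the lighter one. The remaining options form the trade-off curve a planner would choose from: no single option is best on both risk and carbon.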

The GreenSphere Vision

The future of computational sustainability relies on breaking down the digital silos between atmospheric science, data analytics, and heavy civil engineering. We must build continuous digital pathways that connect the global sky directly to the local concrete.

By leveraging massive, open-source advancements in global AI climate modeling and pairing them with localized, agentic digital twins, GreenSphere Innovations is ensuring that infrastructure planners are never flying blind. We are empowering the enterprise to stop reacting to the global climate and start engineering precisely for it. The data to save our physical world is finally here; now, we are providing the computational engine to connect it.