The Hidden Carbon Cost of Legacy Engineering Software

When the technology sector discusses the carbon footprint of software, the conversation is almost exclusively limited to the energy consumption of data centers. We talk about the electricity required to cool server racks, the water usage of hyperscale facilities, and the grid demand of training large language models. While these are critical metrics for the tech industry to manage, they miss a much larger, far more insidious problem hidden within the enterprise software ecosystem.

The most catastrophic carbon footprint of software does not come from the electricity it consumes; it comes from the physical waste it forces human beings to build. When it comes to the legacy software used in civil engineering, urban planning, and structural design, the digital latency of the application is directly responsible for millions of tons of excess greenhouse gases being pumped into the atmosphere every year. We are using sluggish, outdated computational architecture to design the built environment, and the planet is paying the price in concrete and steel.

The Trap of the "Factor of Safety"

To understand this hidden carbon cost, you must understand how physical infrastructure is actually engineered. Civil engineering is governed by a principle known as the "Factor of Safety." Because human lives are at stake, structures cannot be designed to merely withstand the exact load they are expected to carry. They must be designed to withstand a mathematical multiple of that load to account for unknown variables, material imperfections, and unpredictable weather extremes.

However, the Factor of Safety is essentially a buffer for human ignorance. The less you know about how a structure will dynamically behave under stress, the higher you must make your safety factor. If an engineer cannot precisely simulate how the complex geometric corners of a high-rise will respond to hurricane-force wind loading, they have no choice but to over-engineer the building. They compensate for the digital unknown by applying brute physical force: thicker steel columns, deeper concrete foundations, and heavier structural reinforcements.
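To make the relationship concrete, here is a minimal sketch of how a larger safety factor inflates the capacity a member must provide. It deliberately uses a single global factor, which is a simplification (real design codes typically apply separate factors to loads and resistances). The function name and every number below are hypothetical, chosen only for illustration.

```python
def required_capacity(design_load_kn: float, factor_of_safety: float) -> float:
    """Capacity a member must provide: expected load times the safety factor."""
    if factor_of_safety < 1.0:
        raise ValueError("a factor of safety below 1.0 means the member is expected to fail")
    return design_load_kn * factor_of_safety


# Hypothetical values, not taken from any design code.
design_load_kn = 1000.0

# Behaviour is well understood, so a smaller buffer is assumed to be acceptable.
well_understood = required_capacity(design_load_kn, 1.5)

# Behaviour is poorly understood, so the engineer pads the buffer.
poorly_understood = required_capacity(design_load_kn, 2.0)

# Extra capacity (and, roughly, extra material) bought purely by uncertainty.
extra_fraction = (poorly_understood - well_understood) / well_understood
print(f"Uncertainty adds {extra_fraction:.0%} more required capacity")
```

In this toy case the load never changes; only the engineer's confidence does, and that alone raises the required capacity by a third.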

Latency Is the Enemy of Optimization

Why do engineers have these digital unknowns? It is not a lack of talent or mathematical understanding; it is a profound limitation of their computational tools.

Most enterprise engineering software, ranging from traditional CAD platforms to standard Finite Element Analysis (FEA) simulators, is built on sequential, CPU-bound architecture. Running a high-fidelity, dynamic stress test on a massive physical structure using these legacy systems is agonizingly slow. A single comprehensive simulation can take days to complete.

In the fast-paced reality of commercial real estate and infrastructure development, project timelines simply do not allow an engineering team to wait days for a single digital test. If you want to aggressively decarbonize a building by testing a radical new lightweight geometry or a novel sustainable material, you need to run tens of thousands of simulations to find the perfect balance between safety and material efficiency. Because legacy software cannot compute these iterations fast enough, true Multi-Objective Optimization becomes practically impossible.
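The scheduling arithmetic behind this claim can be sketched in a few lines. The figures are assumptions for illustration only: 2 days per simulation stands in for "days", 10,000 runs stands in for "tens of thousands", and the 30-day analysis window is hypothetical.

```python
import math


def wall_clock_days(n_simulations: int, days_per_simulation: float,
                    parallel_workers: int = 1) -> float:
    """Total elapsed time when runs are processed in batches of `parallel_workers`."""
    batches = math.ceil(n_simulations / parallel_workers)
    return batches * days_per_simulation


def runs_that_fit(window_days: float, days_per_simulation: float,
                  parallel_workers: int = 1) -> int:
    """How many complete simulations fit inside a fixed schedule window."""
    return math.floor(window_days / days_per_simulation) * parallel_workers


# Assumed figures, not measurements.
sequential_days = wall_clock_days(n_simulations=10_000, days_per_simulation=2.0)
affordable_runs = runs_that_fit(window_days=30, days_per_simulation=2.0)

print(f"Sequential search: {sequential_days:,.0f} days (~{sequential_days / 365:.0f} years)")
print(f"Runs that fit in a 30-day window: {affordable_runs}")
```

Under these assumptions a full search would take decades on a single sequential solver, while the schedule leaves room for only about fifteen runs.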

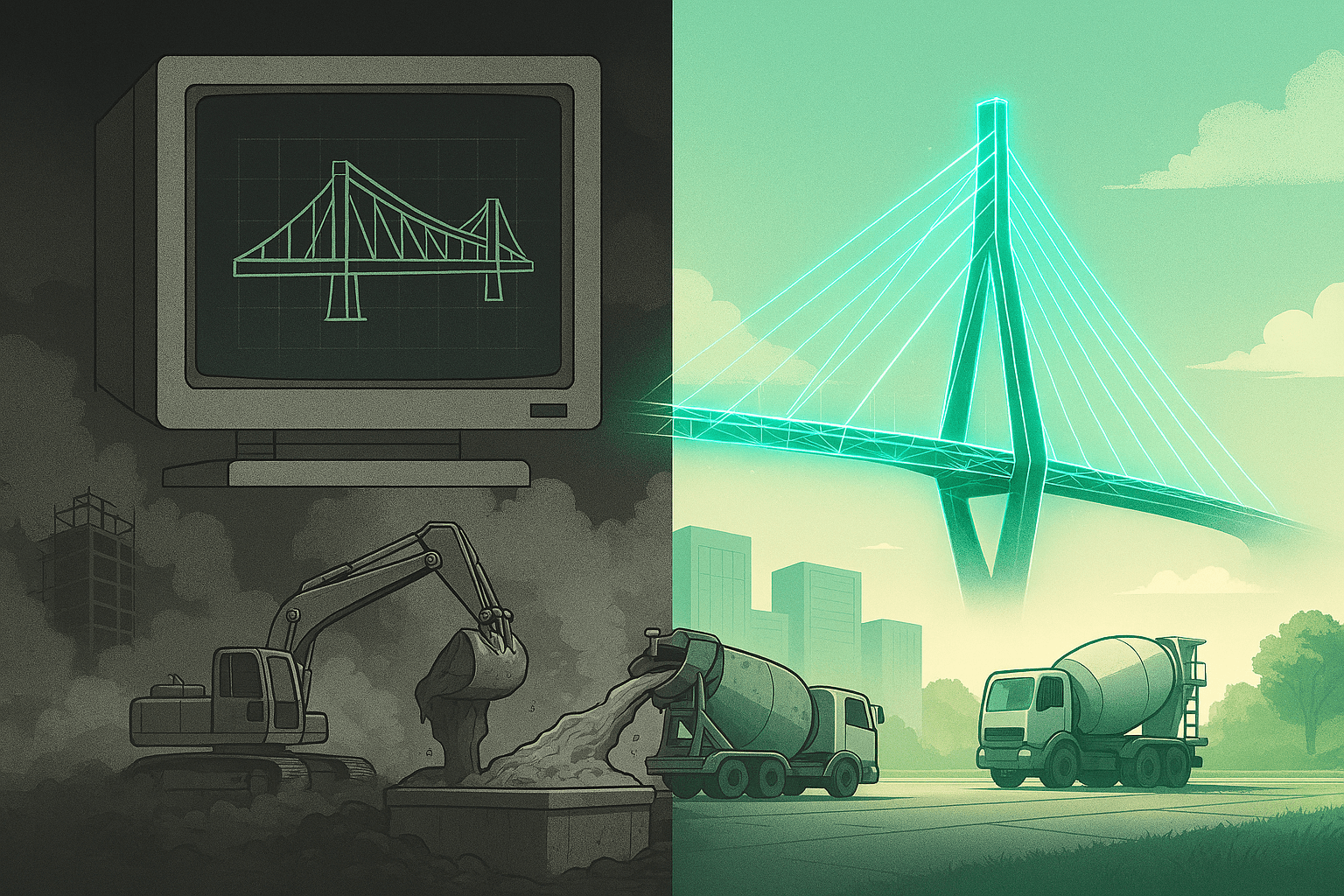

Engineers are forced to settle. They run a handful of basic simulations, find a traditional, heavy design that clears the minimum safety threshold, and "freeze" the design so construction can begin. The software’s latency actively prevents the exploration of low-carbon alternatives.

Translating Digital Slowness to Physical Waste

The environmental consequences of this digital bottleneck are staggering. Cement and steel production are two of the largest industrial sources of carbon dioxide on the planet, together accounting for roughly 15% of global CO2 emissions.

When slow software forces an engineer to blindly increase the mass of a structure by even 5% to satisfy an overly cautious Factor of Safety, that can translate, on a large project, to thousands of tons of completely unnecessary embodied carbon. The concrete didn't need to be poured; the steel didn't need to be forged. It only exists in the physical world because the digital world was too slow to prove it was unnecessary.
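A back-of-the-envelope version of this calculation might look like the sketch below. Everything numeric here is an assumption: the emission factors are illustrative placeholders (real projects should use supplier- and mix-specific values), and the baseline quantities describe a hypothetical large project rather than any real structure.

```python
# Assumed emission factors, in tonnes of CO2e per tonne of material.
# Placeholders for illustration; replace with project-specific data.
ASSUMED_FACTORS = {"concrete": 0.15, "steel": 1.9}


def excess_embodied_carbon(baseline_tonnes: dict, overdesign_fraction: float,
                           factors: dict = ASSUMED_FACTORS) -> float:
    """Embodied carbon (t CO2e) of the extra material added by over-design."""
    return sum(
        mass * overdesign_fraction * factors[material]
        for material, mass in baseline_tonnes.items()
    )


# Hypothetical material quantities for a large project, in tonnes.
baseline = {"concrete": 200_000.0, "steel": 20_000.0}

# 5% extra mass across the board, as in the scenario described above.
excess = excess_embodied_carbon(baseline, overdesign_fraction=0.05)
print(f"Excess embodied carbon: {excess:,.0f} t CO2e")
```

With these placeholder inputs the 5% margin alone lands in the low thousands of tonnes of CO2e; smaller structures or cleaner materials would scale the figure down proportionally.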

This is the hidden carbon cost of legacy engineering software. It is a silent tax levied on the environment, paid in the form of bloated, over-engineered infrastructure.

Breaking the Cycle with GPU Acceleration

We cannot pour our way out of the climate crisis. If we are going to meet our global decarbonization targets, we must absolutely minimize the embodied carbon of every structure we build, without ever compromising human safety. The only way to achieve this is to eliminate the computational latency that causes over-engineering.

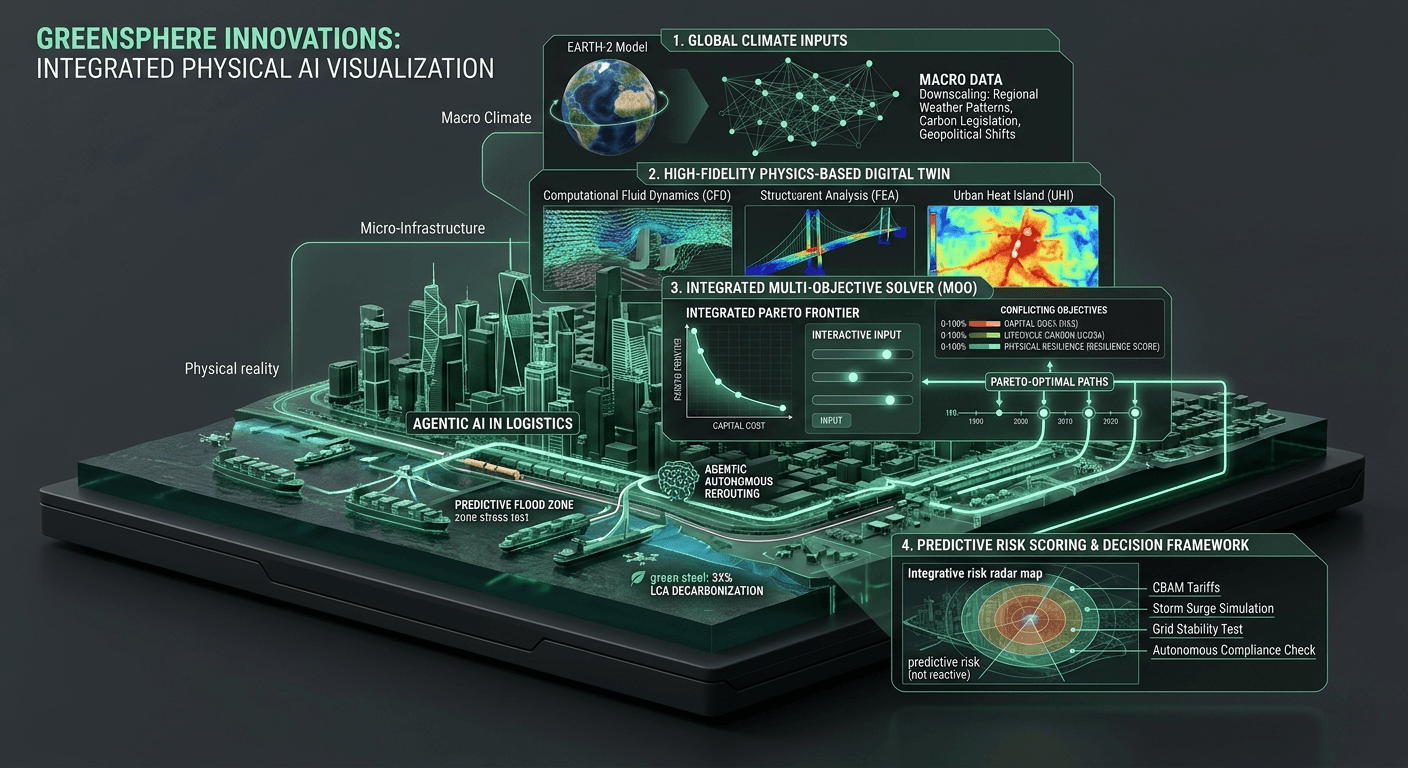

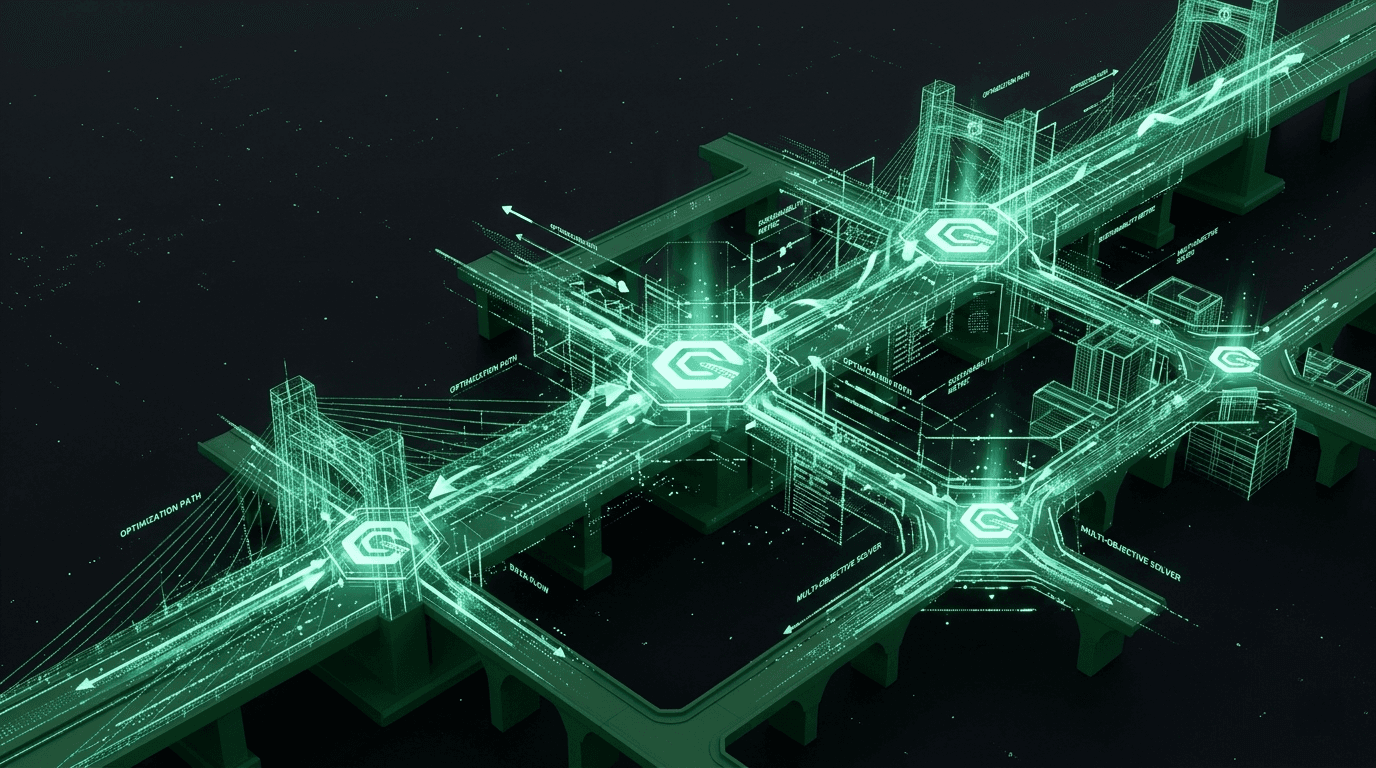

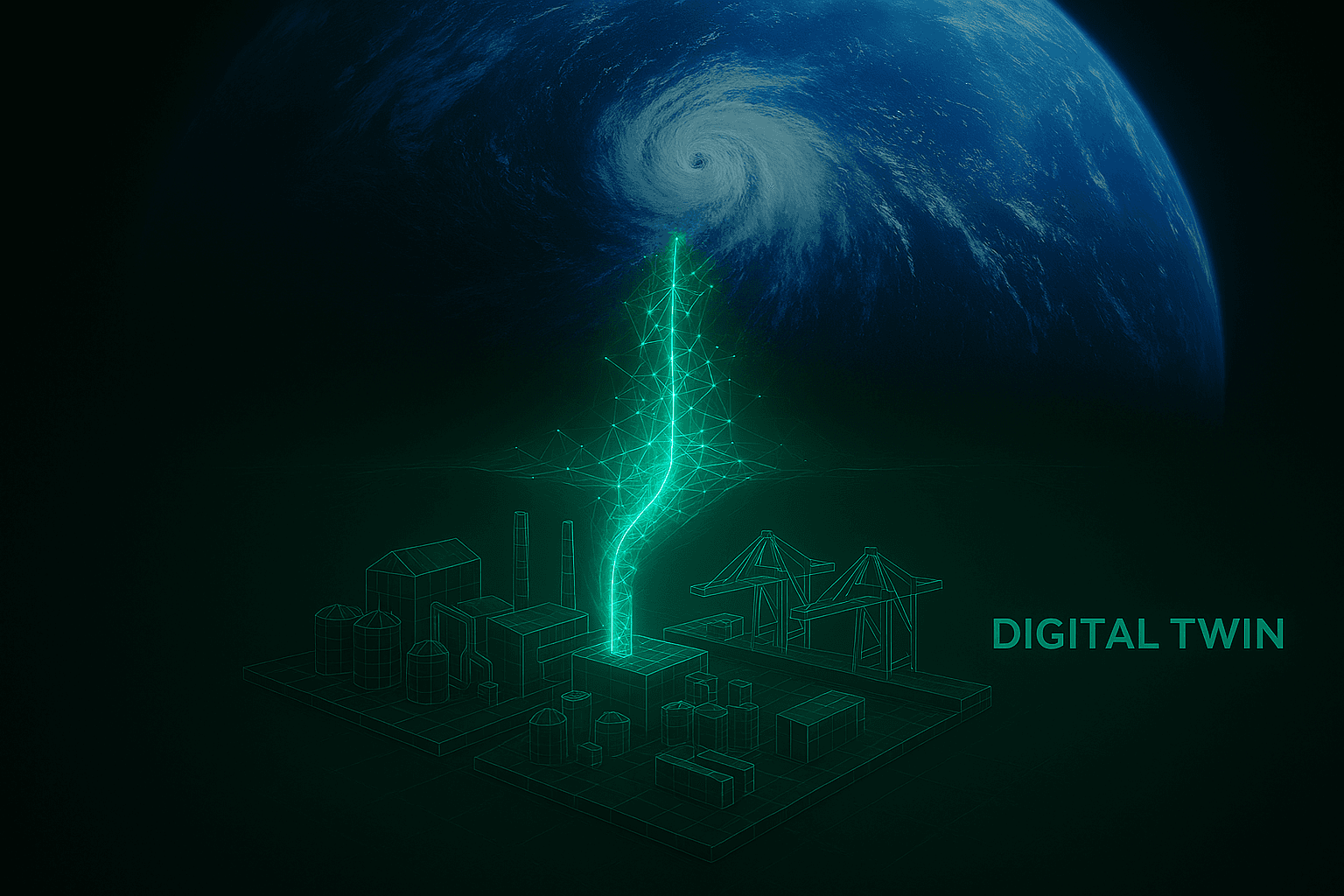

At GreenSphere Innovations, we are attacking this problem at the silicon level. By abandoning legacy CPU architectures and building our simulation engines exclusively on massively parallel GPU Inference Cores, we are changing the speed of reality. We allow engineers to run complex structural and environmental simulations in milliseconds rather than days.

This unprecedented computational velocity unlocks real-time parametric optimization. An engineer can instruct a GreenSphere digital twin to actively search for the minimum amount of material required to survive an extreme climate event. The software runs millions of iterations overnight, safely carving away unnecessary mass and presenting a set of Pareto-optimal designs: candidates for which carbon output cannot be reduced further without increasing physical risk, and vice versa.
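For readers unfamiliar with the term, the sketch below shows what "Pareto-optimal" means for two objectives that are both minimized. It is a brute-force filter over a handful of made-up candidates, not a description of how GreenSphere's engine works; the `Design` type, the names, and all values are hypothetical.

```python
from typing import List, NamedTuple


class Design(NamedTuple):
    name: str
    carbon_t: float  # embodied carbon in tonnes CO2e, lower is better
    risk: float      # assumed failure-risk score, lower is better


def dominates(a: Design, b: Design) -> bool:
    """True if `a` is no worse than `b` on both objectives and strictly better on one."""
    no_worse = a.carbon_t <= b.carbon_t and a.risk <= b.risk
    strictly_better = a.carbon_t < b.carbon_t or a.risk < b.risk
    return no_worse and strictly_better


def pareto_front(designs: List[Design]) -> List[Design]:
    """Keep only designs that no other design dominates (O(n^2) comparison)."""
    return [d for d in designs if not any(dominates(o, d) for o in designs)]


# Made-up candidates for illustration.
candidates = [
    Design("heavy", 1000.0, 0.001),
    Design("medium", 800.0, 0.002),
    Design("light", 600.0, 0.010),
    Design("wasteful", 900.0, 0.005),  # worse than "medium" on both objectives
]

front = pareto_front(candidates)
print([d.name for d in front])
```

The filter discards only the design that is beaten on both axes. The remaining candidates are all defensible trade-offs, and choosing among them is still an engineering and regulatory judgment about acceptable risk.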

The GreenSphere Vision

It is time for the enterprise software industry to take responsibility for its impact on the physical world. Software that designs infrastructure is not just a digital tool; it is the blueprint for our environmental future. We must stop viewing computational speed merely as a convenience for the end-user, and start treating it as a primary weapon in the fight against climate change. By providing the built environment with uncompromising, real-time computational power, GreenSphere Innovations is ensuring that the cities of tomorrow are engineered with mathematical precision, rather than bloated by digital hesitation.