Physics-Based Modeling vs Traditional Analytics

We are living through a golden age of big data and predictive analytics. Across nearly every sector of the global economy, enterprise leaders are using massive datasets and machine learning algorithms to find hidden patterns, optimize supply chains, and predict future market behavior. This flavor of artificial intelligence, driven by statistical correlation and historical extrapolation, has revolutionized software, retail, and digital logistics. However, when we move from the digital realm into the heavy, unforgiving reality of the physical built environment, these methods do not carry over. In the world of civil engineering, structural resilience, and heavy physical infrastructure, traditional predictive analytics is not just insufficient; it is fundamentally the wrong tool for the job.

To understand why, we must examine how traditional predictive analytics actually works. At its core, statistical modeling depends on historical precedent. An algorithm ingests millions of past data points and fits a model that it then extends into the future. If a logistics company wants to predict seasonal shipping delays, it trains a model on ten years of transit times, and the model forecasts well as long as the future resembles that past. But what happens when the future looks nothing like the past? As the effects of climate change accelerate, the historical weather record we have relied upon for a century is becoming a less reliable guide to future conditions. If a coastal highway has never been subjected to a Category 5 hurricane or a sustained 120°F (49°C) heat dome, a predictive algorithm trained only on that highway's history has no observations in that regime, and therefore no sound basis for predicting how the highway will fail. It can only extrapolate from the nearest conditions it has seen, and in civil engineering, an unsupported extrapolation can be catastrophic.
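
The extrapolation problem can be sketched in a few lines of Python. This is a minimal illustration, not a real pavement or weather model: the `true_response` function, its 45-degree threshold, and every number below are hypothetical and chosen only to show what happens when a line of best fit is extended past the range of its training data.

```python
# Illustrative only: synthetic data, hypothetical response function.

def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def true_response(temp_c):
    """Hypothetical damage index: linear up to 45 C, sharply nonlinear above."""
    base = 1.0 + 0.02 * temp_c
    if temp_c <= 45.0:
        return base
    return base + 0.5 * (temp_c - 45.0) ** 2


# "Historical record": temperatures the structure has actually experienced.
observed_temps = [20.0, 25.0, 30.0, 35.0, 40.0]
observed_damage = [true_response(t) for t in observed_temps]

slope, intercept = fit_line(observed_temps, observed_damage)

# Ask the fitted line about a temperature it has never seen.
unseen_temp = 50.0
statistical_guess = slope * unseen_temp + intercept
actual_value = true_response(unseen_temp)

print(f"fitted line predicts {statistical_guess:.2f}, "
      f"underlying response is {actual_value:.2f}")
```

The fitted line matches the observed range perfectly and still misses the unseen regime by a wide margin, because nothing in the training data reveals that a threshold exists.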

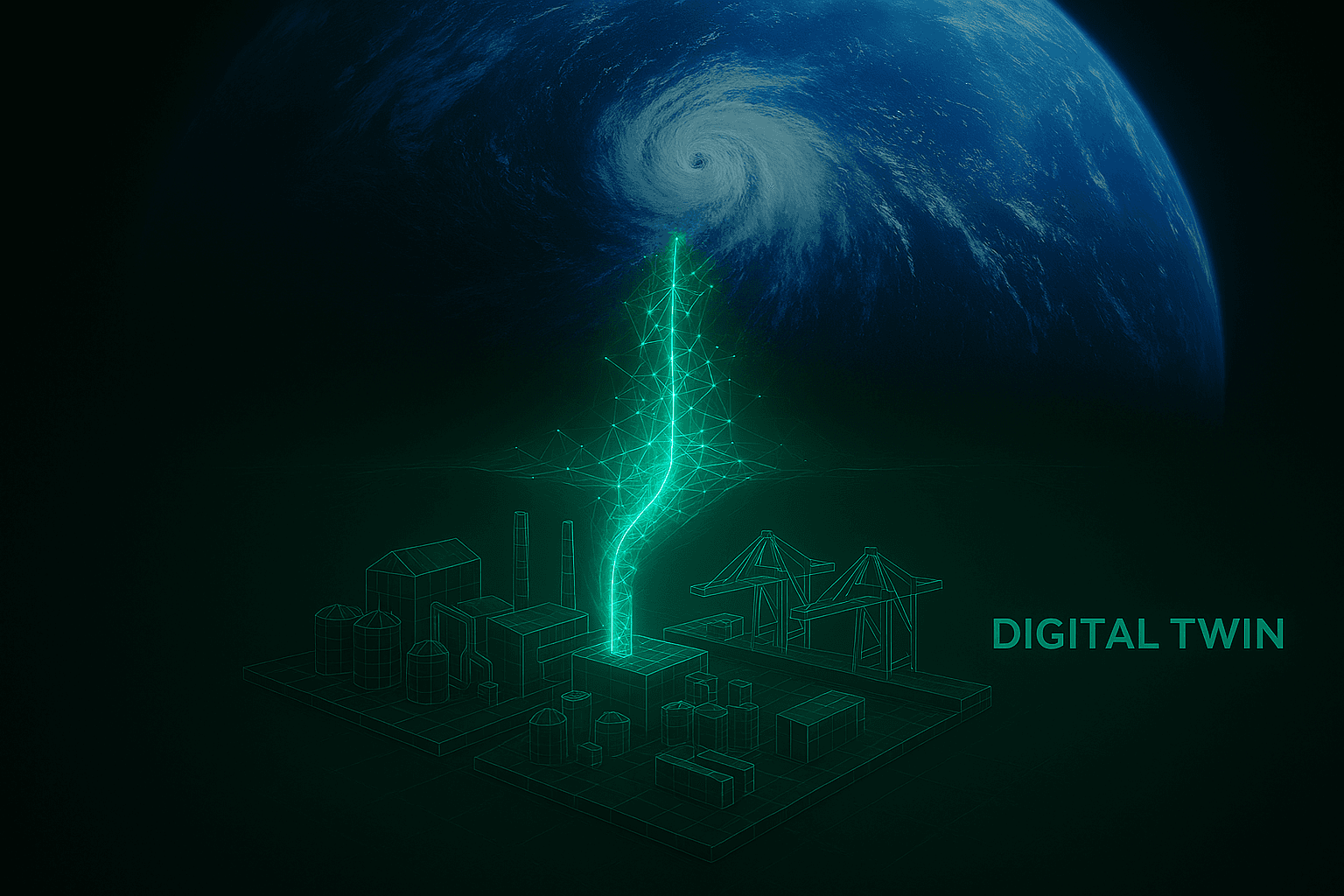

Physics-based modeling operates on an entirely different paradigm. Rather than searching for statistical patterns in historical data, physics-based models calculate outcomes from the governing laws of nature. These systems rely on first principles: Newtonian mechanics, thermodynamics, fluid dynamics, and materials science. A physics-based digital twin does not need a spreadsheet of past bridge failures to understand how a bridge might collapse. Instead, it computes the aerodynamic flutter response of a specific steel geometry under a specific wind load. It models the thermal expansion of a concrete foundation under intense solar radiation. It doesn't predict what usually happens; it calculates what the governing equations say will happen, to an accuracy limited by the quality of the geometry, material properties, loads, and boundary conditions it is given.
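
As a small first-principles example, the thermal expansion mentioned above follows from the standard linear relation ΔL = α·L·ΔT, and a fully restrained member develops a stress of σ = E·α·ΔT. The sketch below applies both. The slab length, temperature rise, expansion coefficient, and elastic modulus are assumed, order-of-magnitude values for concrete used only for illustration; they are not design figures, and the function names are hypothetical.

```python
# Assumed, illustrative material values for concrete (not design data).
ALPHA_CONCRETE = 1.0e-5      # coefficient of thermal expansion, 1/degC
E_CONCRETE = 30.0e9          # Young's modulus, Pa


def free_expansion(length_m, delta_t_c, alpha=ALPHA_CONCRETE):
    """Change in length (m) of an unrestrained member: dL = alpha * L * dT."""
    return alpha * length_m * delta_t_c


def restrained_stress(delta_t_c, alpha=ALPHA_CONCRETE, youngs_modulus=E_CONCRETE):
    """Stress (Pa) in a fully restrained member: sigma = E * alpha * dT."""
    return youngs_modulus * alpha * delta_t_c


slab_length_m = 30.0         # hypothetical slab length
temperature_rise_c = 40.0    # hypothetical temperature rise

expansion_m = free_expansion(slab_length_m, temperature_rise_c)
stress_pa = restrained_stress(temperature_rise_c)

print(f"free expansion: {expansion_m * 1000:.1f} mm")
print(f"stress if fully restrained: {stress_pa / 1e6:.1f} MPa")
```

No historical record of this slab is needed. The result follows from the material properties and the temperature change, and the same calculation applies to a temperature the slab has never experienced.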

Consider the challenge of designing next-generation, low-carbon infrastructure. The goal is to aggressively reduce the embodied carbon in a structure without compromising its safety. If you try to optimize this using traditional analytics, the system can only look at historical building designs and suggest minor statistical variations on them. It will give you a slightly more efficient version of the past. Physics-based modeling, however, allows engineers to push into uncharted territory. Because the software calculates the actual stress, strain, and load distributions, engineers can experiment with radical new geometries and novel lightweight, sustainable materials, provided those materials' properties have been characterized well enough to model. You cannot A/B test a skyscraper in the real world, but inside a physics-based simulation you can subject unprecedented structural designs to unprecedented climate extremes and quantify how they respond before anything is built.

The reason physics-based modeling has not completely overtaken traditional analytics in the enterprise software space is straightforward: it is computationally expensive. Finding a statistical pattern in a database requires relatively little processing power. Conversely, running a high-fidelity Finite Element Analysis (FEA) or Computational Fluid Dynamics (CFD) simulation on a massive, city-scale digital twin means discretizing the governing differential equations into systems of millions of coupled equations and solving them, often repeatedly at every time step. Historically, running these non-linear, dynamic physics calculations on CPU-only hardware took days or even weeks. That was too slow for agile, real-time decision making, forcing planners to fall back on faster, less accurate statistical models.
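
To make the cost concrete, here is a deliberately tiny finite element sketch: a one-dimensional steel bar, fixed at one end and pulled at the other, split into four linear elements. It is a toy under stated assumptions (linear elasticity, static load, a dense matrix, a naive solver, hypothetical dimensions and function names) and not a description of any production solver. A city-scale model follows the same assemble-and-solve pattern, but with millions of unknowns instead of four.

```python
def gaussian_solve(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination without pivoting.
    Adequate here because the reduced stiffness matrix is positive definite."""
    n = len(rhs)
    a = [row[:] for row in matrix]
    b = rhs[:]
    for i in range(n):
        for j in range(i + 1, n):
            factor = a[j][i] / a[i][i]
            for c in range(i, n):
                a[j][c] -= factor * a[i][c]
            b[j] -= factor * b[i]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        tail = sum(a[i][c] * x[c] for c in range(i + 1, n))
        x[i] = (b[i] - tail) / a[i][i]
    return x


def solve_axial_bar(length, area, youngs_modulus, tip_load, n_elements):
    """Nodal displacements of a bar fixed at x = 0 with a point load at the tip."""
    element_length = length / n_elements
    k = youngs_modulus * area / element_length   # stiffness of one element
    n_nodes = n_elements + 1

    # Assemble the global stiffness matrix from identical 2x2 element matrices.
    stiffness = [[0.0] * n_nodes for _ in range(n_nodes)]
    for e in range(n_elements):
        stiffness[e][e] += k
        stiffness[e][e + 1] -= k
        stiffness[e + 1][e] -= k
        stiffness[e + 1][e + 1] += k

    force = [0.0] * n_nodes
    force[-1] = tip_load

    # Fixed support at node 0: drop its row and column, solve for the rest.
    reduced = [row[1:] for row in stiffness[1:]]
    free_displacements = gaussian_solve(reduced, force[1:])
    return [0.0] + free_displacements


# Hypothetical steel bar: 2 m long, 0.01 m^2 cross-section, 100 kN tip load.
displacements = solve_axial_bar(
    length=2.0, area=0.01, youngs_modulus=200.0e9, tip_load=1.0e5, n_elements=4
)
analytical_tip = 1.0e5 * 2.0 / (200.0e9 * 0.01)   # P * L / (E * A)

print(f"FEA tip displacement:        {displacements[-1]:.6e} m")
print(f"analytical tip displacement: {analytical_tip:.6e} m")
```

For this simple case the finite element result agrees with the closed-form answer PL/(EA). The naive dense elimination used here scales roughly with the cube of the number of unknowns, which is why real solvers rely on sparse and iterative methods, and why large models are so demanding even then.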

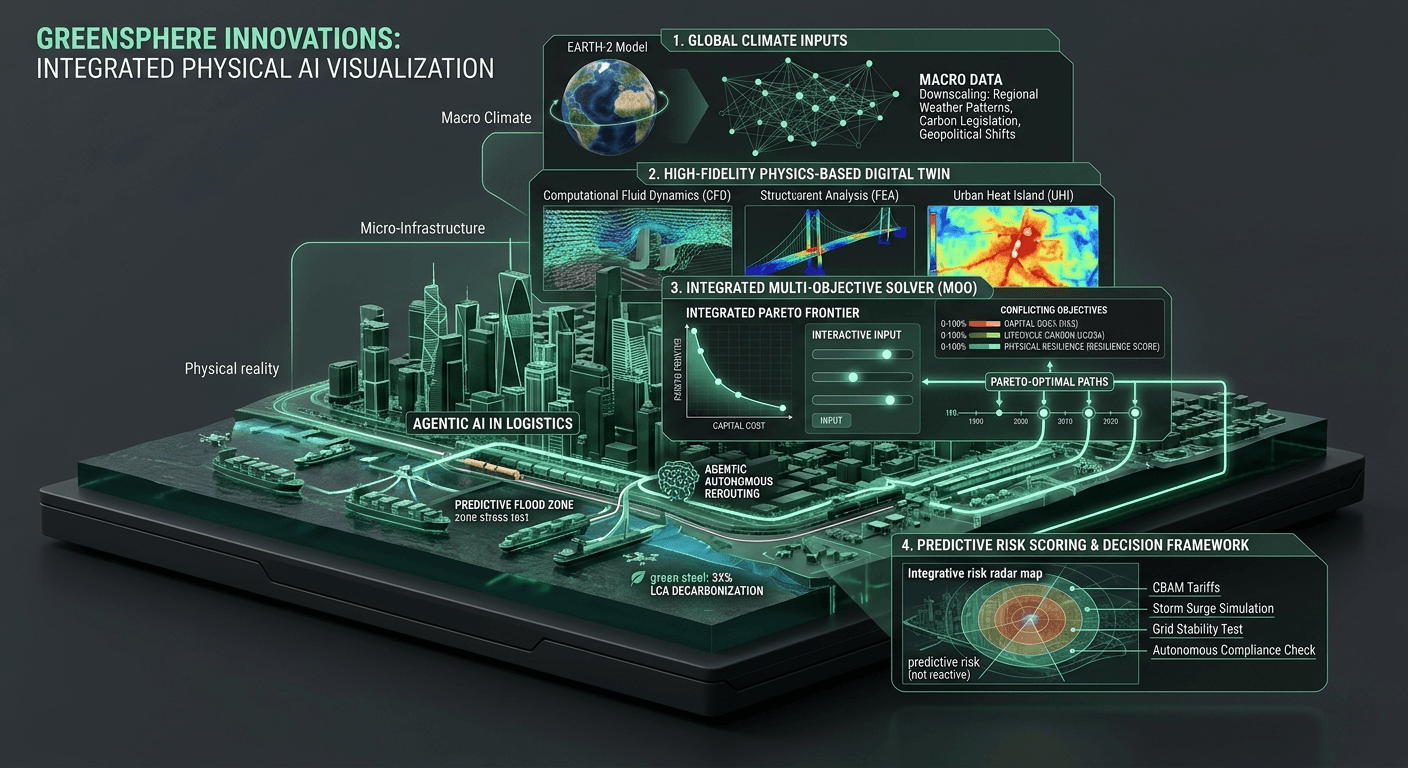

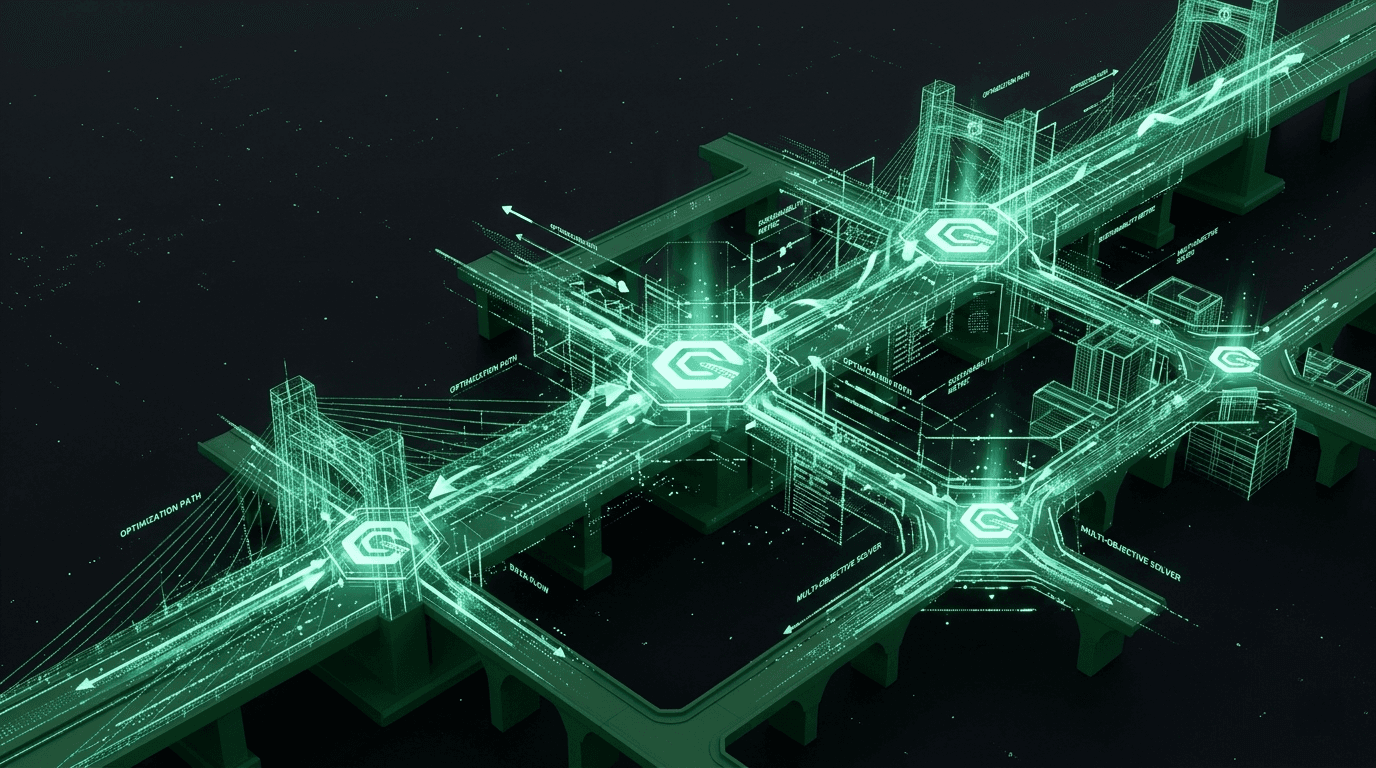

This is the computational bottleneck that GreenSphere Innovations was founded to eliminate. By shifting the computational burden of physics-based modeling away from largely serial CPU execution and onto massively parallel GPU cores, we are changing how quickly these questions can be answered. We are taking rigorous, first-principles physics calculations that used to require a week of compute time and executing them in minutes. This means that enterprise logistics teams and structural engineers no longer have to choose between speed and accuracy. They can run thousands of physics-based permutations in the time it used to take to generate a single statistical report.

The GreenSphere Vision

The future of sustainable infrastructure cannot be built on statistical correlations or historical guesswork. We must engineer our physical world with rigor, using tools that respect the harsh physical realities of a changing climate. At GreenSphere, we are actively bridging the gap between heavy civil engineering and high-performance computing to make real-time, physics-based digital twins a reality. We are giving builders the computational power to optimize not just for the statistical average, but for the physical extremes. By prioritizing fundamental mechanics over predictive algorithms, we are working to ensure that the green infrastructure of tomorrow is not just theoretically optimal, but physically resilient.