Multi-Objective Optimization for Smart Cities

The term "Smart City" has been heavily commodified over the last decade. For years, the enterprise tech industry has used the phrase to sell incremental digital upgrades—Wi-Fi in public parks, localized traffic cameras, and digital dashboards that monitor municipal water usage. While these are positive civic improvements, they do not constitute a truly "smart" ecosystem. A dashboard that simply reports that a city is experiencing a grid failure or a traffic gridlock is fundamentally reactive. It is an observational tool, not an engineering solution.

A genuine smart city is not just a collection of sensors; it is a highly integrated, dynamic organism. It is an interconnected network of physical systems—energy distribution, water logistics, transit routing, and structural health—that constantly and autonomously optimize themselves. However, building this level of municipal autonomy requires solving one of the most complex mathematical challenges in systems engineering. We cannot simply tell a city to "optimize." We have to provide the computational architecture to balance violently competing interests.

The Illusion of Single-Metric Optimization

When municipal planners attempt to improve an urban environment, they often fall into the trap of single-metric optimization. This occurs when a city attempts to solve a massive systemic issue by isolating one variable and optimizing it to the absolute maximum, entirely ignoring the cascading physical effects on the rest of the ecosystem.

Consider urban traffic flow. If an algorithm is tasked with optimizing a city grid strictly for the fastest possible transit times, it might route thousands of heavy freight trucks through dense, low-income residential neighborhoods to bypass highway congestion. Transit time decreases, but localized carbon emissions and particulate pollution in that neighborhood skyrocket. Conversely, if you optimize an energy grid strictly for the absolute lowest operational cost, you might strip away critical redundancies. The grid looks highly efficient on a spreadsheet in October, but when an unprecedented heat dome settles over the city in July, that "optimized" grid collapses under peak load, leaving millions without life-saving air conditioning.

When you optimize a massive physical system for only one variable, you almost guarantee a catastrophic failure somewhere else in the network. A city cannot be optimized for just cost, or just carbon, or just speed.
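The freight-routing scenario above can be made concrete with a small sketch. The route names and numbers below are hypothetical, chosen only to illustrate the failure mode; they are not measurements from any real city.

```python
# Hypothetical routing options for one freight corridor (illustrative numbers only).
routes = [
    {"name": "highway", "transit_min": 42, "residential_pm25": 5},
    {"name": "residential_cut_through", "transit_min": 31, "residential_pm25": 48},
    {"name": "ring_road", "transit_min": 36, "residential_pm25": 9},
]

# Single-metric optimization: the solver only ever sees transit time.
fastest = min(routes, key=lambda r: r["transit_min"])

# The metric the optimizer was never shown.
cleanest = min(routes, key=lambda r: r["residential_pm25"])

print("Chosen for speed:", fastest["name"], "| PM2.5 index:", fastest["residential_pm25"])
print("Cleanest option: ", cleanest["name"], "| transit minutes:", cleanest["transit_min"])
```

In this toy data, the route that wins on speed is also the worst option for neighborhood air quality, and nothing in the single-metric objective would ever flag that.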

The Mathematics of the Pareto Frontier

To build a resilient smart city, planners must rely on Multi-Objective Optimization (MOO). MOO is a mathematical framework designed to handle scenarios where multiple, often conflicting objectives need to be achieved simultaneously. In the context of civil engineering and urban planning, those competing objectives are usually capital cost, lifecycle carbon emissions, and civilian resilience.

Because these objectives conflict (a more resilient sea wall generally costs more and requires more carbon-intensive materials), there is rarely a single "perfect" solution. Instead, MOO algorithms search for what is known as the Pareto frontier: the set of solutions for which no objective (such as carbon) can be improved without worsening at least one other (such as cost or safety). A design that is beaten or matched on every objective by some other design, and strictly beaten on at least one, is said to be dominated and is excluded from the frontier.
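As a minimal sketch of that idea, the code below checks Pareto dominance and filters a list of candidate designs down to the non-dominated set. It assumes every objective is written so that lower is better (resilience is expressed here as expected outage hours), and the sea-wall figures are invented for illustration. The simple pairwise scan is one textbook way to do this, not a description of any particular production solver.

```python
from typing import List, Sequence, Tuple

# Assumption: every objective is expressed so that lower is better.
Design = Tuple[float, ...]


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if a is no worse than b on every objective and strictly better on one."""
    no_worse = all(x <= y for x, y in zip(a, b))
    strictly_better = any(x < y for x, y in zip(a, b))
    return no_worse and strictly_better


def pareto_front(designs: List[Design]) -> List[Design]:
    """Keep the designs that no other design dominates (simple O(n^2) scan)."""
    return [d for d in designs if not any(dominates(o, d) for o in designs)]


# Hypothetical sea-wall options:
# (capital cost in $M, lifecycle carbon in kt CO2e, expected outage hours per year)
sea_walls = [
    (120.0, 40.0, 30.0),
    (180.0, 65.0, 8.0),
    (150.0, 50.0, 15.0),
    (190.0, 70.0, 15.0),  # worse than (180, 65, 8) on every objective
    (120.0, 45.0, 30.0),  # same cost and outage as the first, but more carbon
]

front = pareto_front(sea_walls)
for cost, carbon, outage in front:
    print(f"cost={cost} carbon={carbon} outage_hours={outage}")
```

The three surviving designs are all defensible: each one gives up something on one objective to gain on another, which is exactly the trade-off a planner has to weigh.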

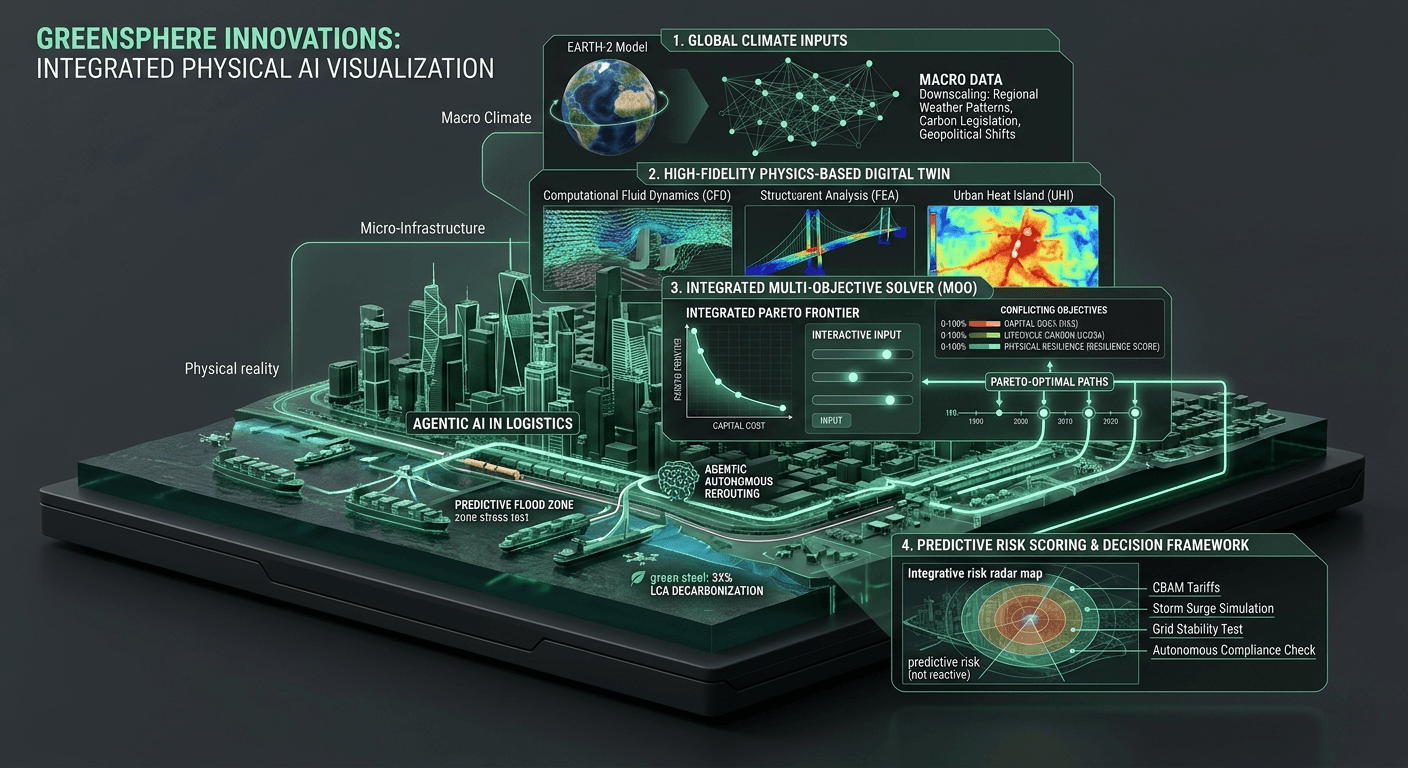

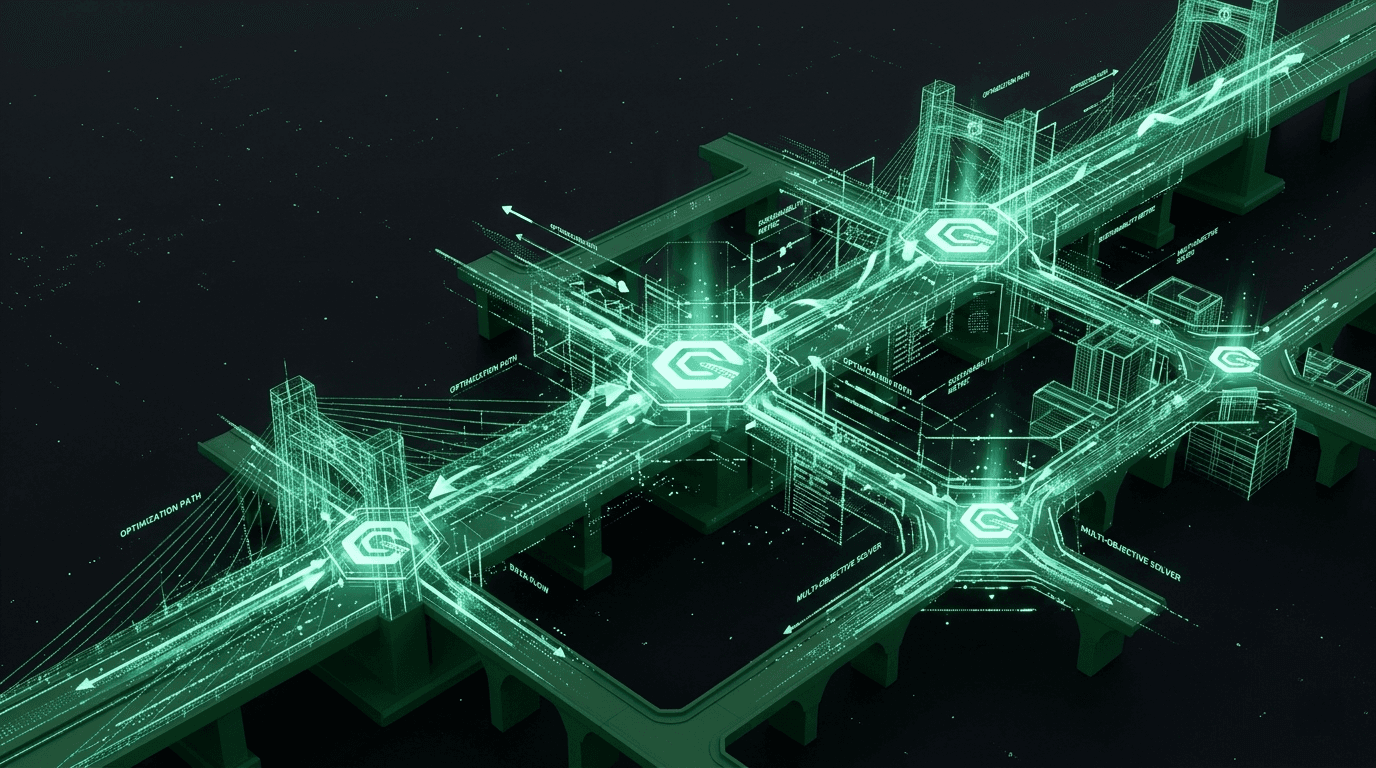

By mapping this Pareto frontier, GreenSphere’s digital twin architecture gives city planners a quantified view of the trade-offs behind their decisions. If a city council is debating the layout of a new transit hub, our multi-objective solvers evaluate tens of thousands of architectural and logistical permutations. The system discards the dominated designs and presents only the Pareto-optimal ones, each representing a different balance between the city's ESG goals, its budget, and its safety mandates.
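One way a planning team might narrow a frontier down to a shortlist is to apply hard constraints (a budget cap, a minimum resilience score) and then rank what remains. The sketch below assumes a hypothetical `HubDesign` record, a hypothetical 0 to 1 resilience score, and invented figures; it illustrates the selection step only and is not GreenSphere's actual data model.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class HubDesign:
    """Hypothetical record for one Pareto-optimal transit hub layout."""
    name: str
    cost_musd: float    # capital cost, $M
    carbon_kt: float    # lifecycle carbon, kt CO2e
    resilience: float   # assumed 0-1 score, higher is better


def shortlist(frontier: List[HubDesign], budget_musd: float,
              min_resilience: float) -> List[HubDesign]:
    """Apply budget and safety mandates, then rank survivors by carbon."""
    feasible = [
        d for d in frontier
        if d.cost_musd <= budget_musd and d.resilience >= min_resilience
    ]
    return sorted(feasible, key=lambda d: d.carbon_kt)


# Illustrative frontier: none of these designs dominates another.
frontier = [
    HubDesign("compact", 80.0, 30.0, 0.60),
    HubDesign("elevated", 110.0, 34.0, 0.80),
    HubDesign("hardened", 140.0, 41.0, 0.92),
    HubDesign("timber", 95.0, 26.0, 0.70),
]

picks = shortlist(frontier, budget_musd=120.0, min_resilience=0.65)
for d in picks:
    print(d.name, d.cost_musd, d.carbon_kt, d.resilience)
```

Ranking by carbon is only one possible tie-breaker. The point is that the constraints come from the council's mandates, while the frontier itself stays a neutral description of what is achievable.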

GPU Acceleration at the Municipal Scale

Calculating the Pareto frontier for a single commercial building is computationally heavy. Calculating it for an entire interconnected smart city has historically been impractical on legacy hardware. The sheer volume of data (millions of vehicles, fluctuating energy loads, micro-climate weather patterns, and real-time structural stresses) creates a high-dimensional search space in which the number of candidate solutions grows combinatorially, overwhelming traditional CPU-bound servers that evaluate those candidates largely in sequence.

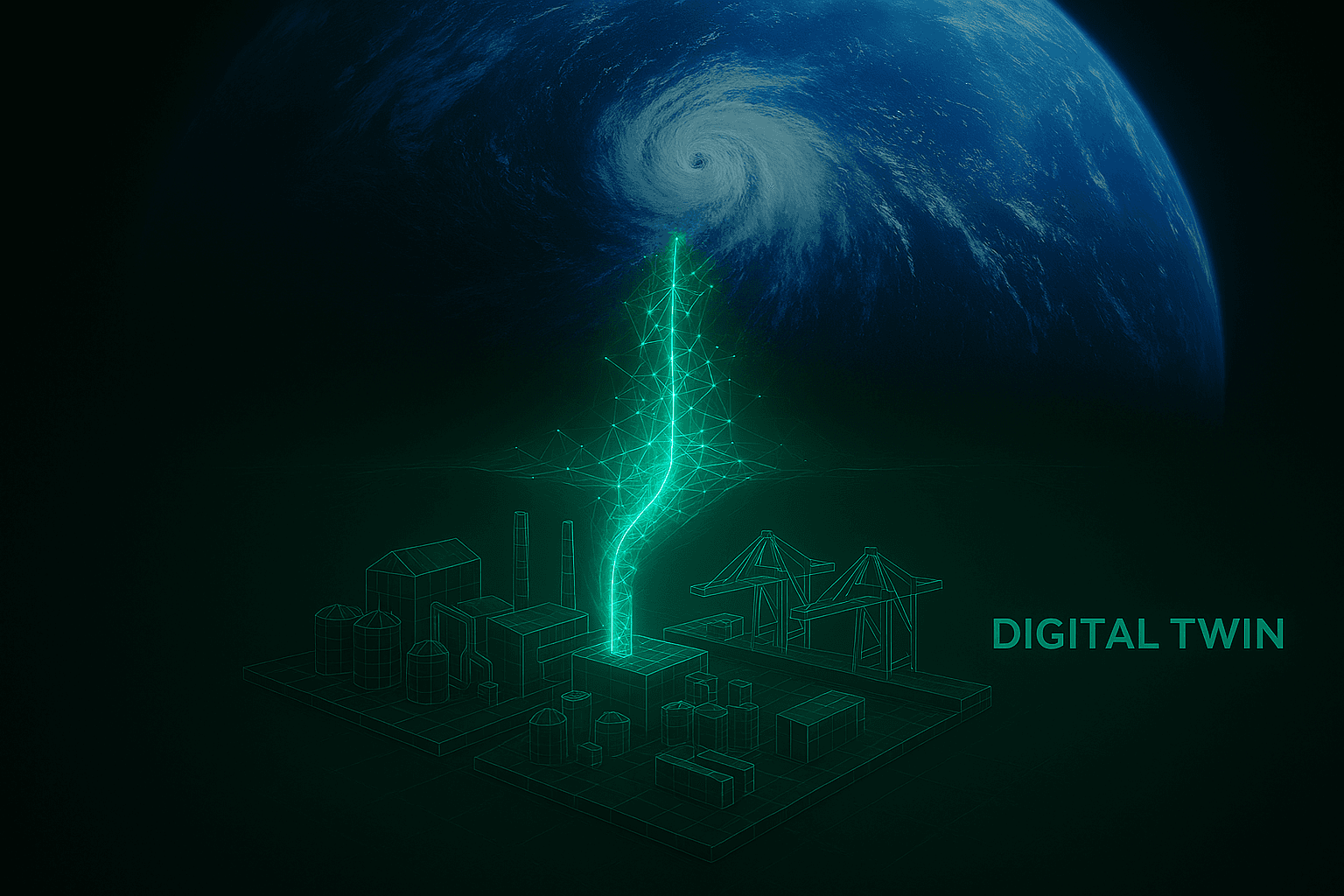

This is the exact computational bottleneck that prevents cities from becoming truly "smart." If a storm is approaching and the city needs to dynamically re-route traffic and power loads to minimize disruption, an algorithm that takes six hours to run is useless.

At GreenSphere Innovations, we are powering urban MOO with our native GPU Inference Core. By shifting municipal calculations to massively parallel processing, we allow cities to run multi-objective optimizations in real time. We drop the inference latency from hours to milliseconds. This means a city’s digital twin can ingest a sudden spike in thermal data, calculate the necessary load-shedding across a million residential nodes to prevent a blackout, balance that action against the emissions of carbon-intensive backup generators, and execute the optimal response before a human operator even registers the anomaly.

The GreenSphere Vision

We are rapidly approaching a tipping point in urban development. As populations densify and the climate becomes increasingly hostile, the margin for error in city planning is disappearing. We can no longer afford to build cities based on guesswork, political expediency, or single-metric illusions.

At GreenSphere, we believe that the cities of tomorrow must be engineered with uncompromising mathematical precision. By bringing the raw power of GPU-accelerated Multi-Objective Optimization to the municipal level, we are giving urban planners the ultimate computational engine. It is time to move past the era of the "connected" city and build the era of the optimized, resilient, and sustainable metropolis.