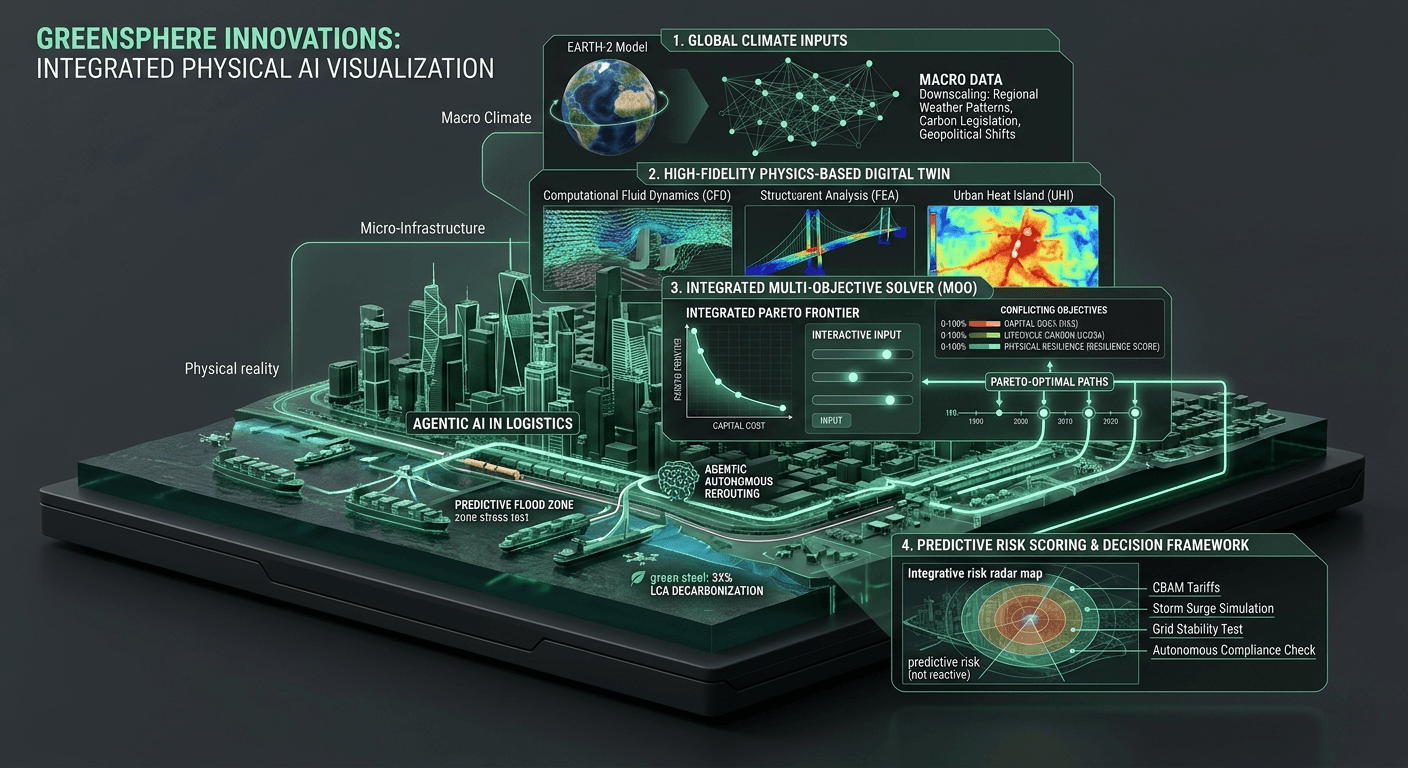

GPU vs CPU: The Math Behind Resilient Infrastructure

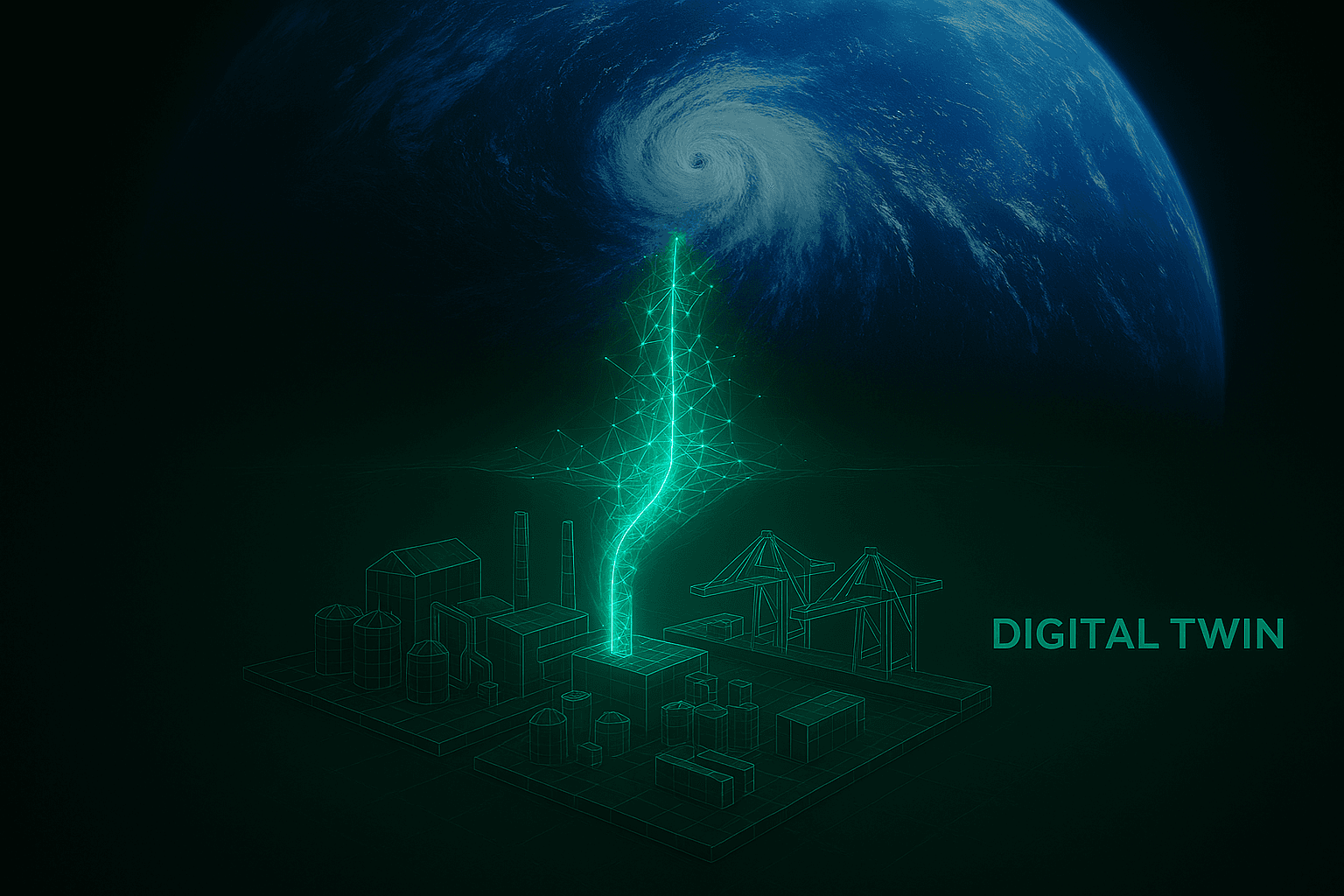

At the heart of every towering skyscraper, expansive logistics network, and resilient coastal seawall is a fundamental truth: civil engineering is ultimately an exercise in applied mathematics. Before a single physical material is procured or a foundation is poured, an engineer must mathematically prove that the structure will survive the forces of nature. For decades, the computational tools used to calculate these forces were sufficient. But as we enter an era defined by extreme climate volatility and urgent decarbonization mandates, the math has fundamentally changed.

We are no longer just calculating whether a building will stand up under normal conditions. We are calculating how a million-node structural steel frame will react to an unprecedented Category 5 hurricane, while simultaneously optimizing that frame to use the absolute minimum amount of high-carbon material. This is a staggering mathematical burden, and it is exposing a critical vulnerability in the built environment: our legacy hardware architecture is failing us. To build the resilient infrastructure of the future, we must understand the profound mathematical difference between Central Processing Units (CPUs) and Graphics Processing Units (GPUs).

The Linear Limitations of Legacy CPUs

Virtually all traditional civil engineering software, from standard CAD programs to legacy structural analysis tools, was designed to run on CPUs. A CPU is an incredibly sophisticated calculator. It is designed to execute highly complex, branching instructions exceptionally fast, but it does so largely sequentially. A modern CPU has a relatively small number of cores, and each one works through its operations one after another in a linear queue.

Imagine a CPU as a single, incredibly fast sports car making deliveries across a city. It can reach its destination very quickly, but it can only carry one package at a time.

In structural engineering, this sequential processing becomes a massive bottleneck when executing Finite Element Analysis (FEA). FEA is the mathematical method used to predict how a structure reacts to real-world forces (heat, vibration, wind). It works by breaking a massive structure down into hundreds of thousands of tiny, finite elements, forming a complex mesh.
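To make the idea of a mesh concrete, here is a minimal sketch of FEA on the simplest possible structure: a uniform bar fixed at one end and pulled at the other, split into a few elements. It is an illustration only, not production solver code. The function names, the material values, and the dense Gaussian elimination are all assumptions chosen for readability; real FEA packages use sparse matrices and far more sophisticated solvers.

```python
def assemble_bar_stiffness(n_elements, length, youngs_modulus, area):
    """Assemble the global stiffness matrix K of a uniform 1D bar."""
    n_nodes = n_elements + 1
    # Axial stiffness of one element: k = E * A / element_length
    k = youngs_modulus * area / (length / n_elements)
    K = [[0.0] * n_nodes for _ in range(n_nodes)]
    for e in range(n_elements):
        i, j = e, e + 1  # the two nodes this element connects
        K[i][i] += k
        K[i][j] -= k
        K[j][i] -= k
        K[j][j] += k
    return K


def solve_dense(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(M[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (M[r][n] - s) / M[r][r]
    return x


def bar_displacements(n_elements, length, youngs_modulus, area, tip_load):
    """Nodal displacements of a bar fixed at node 0 with a load at the free end."""
    K = assemble_bar_stiffness(n_elements, length, youngs_modulus, area)
    f = [0.0] * (n_elements + 1)
    f[-1] = tip_load
    # Fixed support at node 0: drop its row and column, then solve K u = f
    K_reduced = [row[1:] for row in K[1:]]
    return [0.0] + solve_dense(K_reduced, f[1:])


# Illustrative values: a 2 m steel-like bar (E = 200 GPa, A = 0.01 m^2), 100 kN load
u = bar_displacements(n_elements=4, length=2.0, youngs_modulus=200e9,
                      area=0.01, tip_load=1.0e5)
```

For this toy problem the tip displacement can be checked by hand against P·L/(E·A). A real building model follows the same assemble-then-solve pattern, only with hundreds of thousands of elements and several unknowns per node.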

When you run a structural simulation on a CPU, the solver works through the mesh a small piece at a time: it resolves the math for one element, moves to the next, resolves the math, and so on, with only as much overlap as a handful of cores allows. But physical reality does not happen sequentially. When a massive wind shear hits a suspension bridge, the force is applied to millions of distinct points at the same instant. Forcing this simultaneous physical reality through the largely linear pipeline of a CPU causes massive latency. A high-fidelity, dynamic simulation of a climate event can take days or weeks to run on a traditional server.

The Massively Parallel Power of GPUs

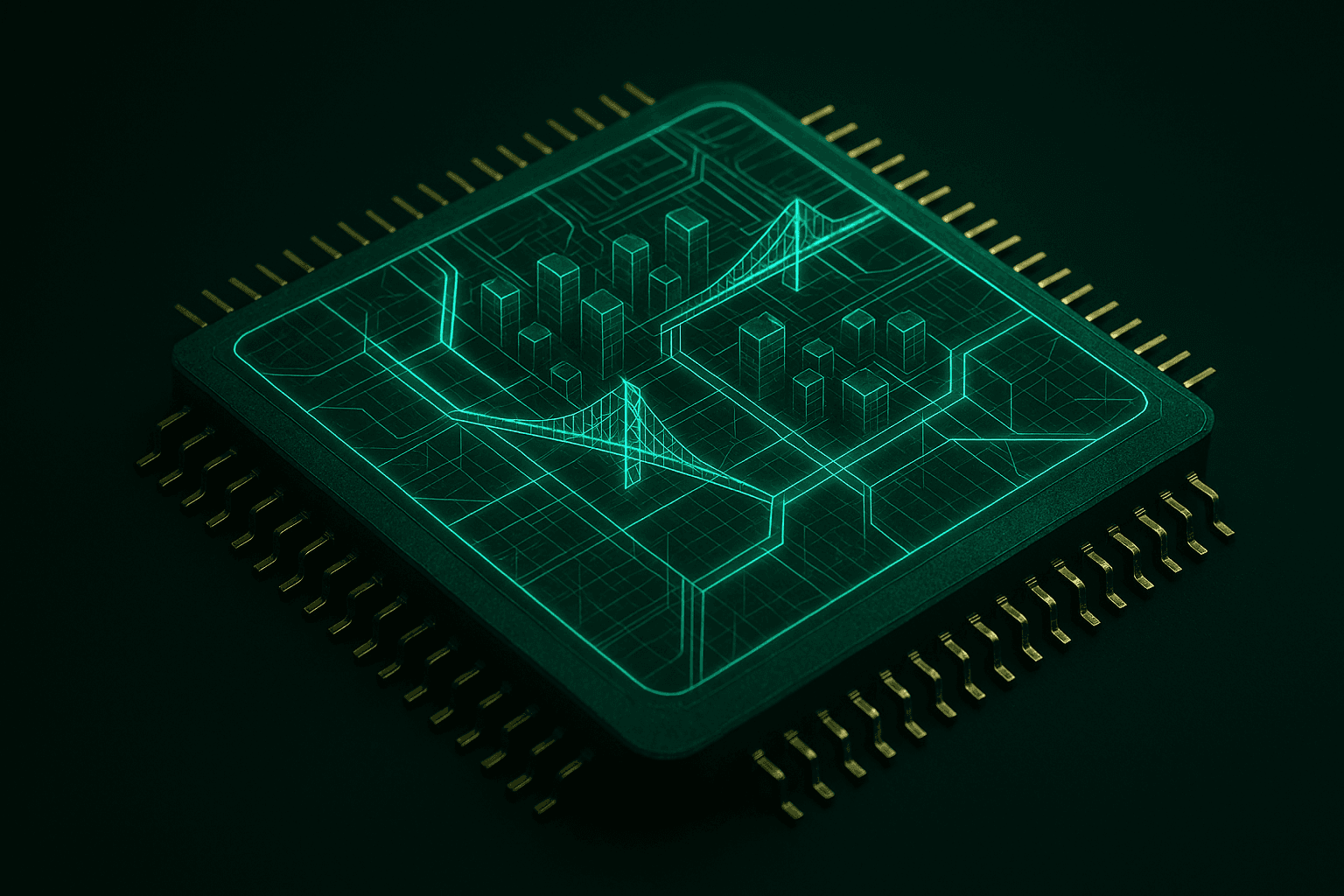

This computational bottleneck is precisely why GreenSphere Innovations has built its architecture around the Graphics Processing Unit (GPU).

GPUs were originally engineered for the video game industry to render millions of independent pixels on a screen simultaneously. Hardware engineers quickly realized that if a chip architecture can calculate the color and lighting of a million pixels at once, it can also calculate the physical force, thermal expansion, and aerodynamic load on a million structural nodes at once.

If a CPU is a single, fast sports car, a GPU is a fleet of ten thousand delivery trucks. They might move slightly slower individually, but they deliver all the packages across the entire city at the exact same time.

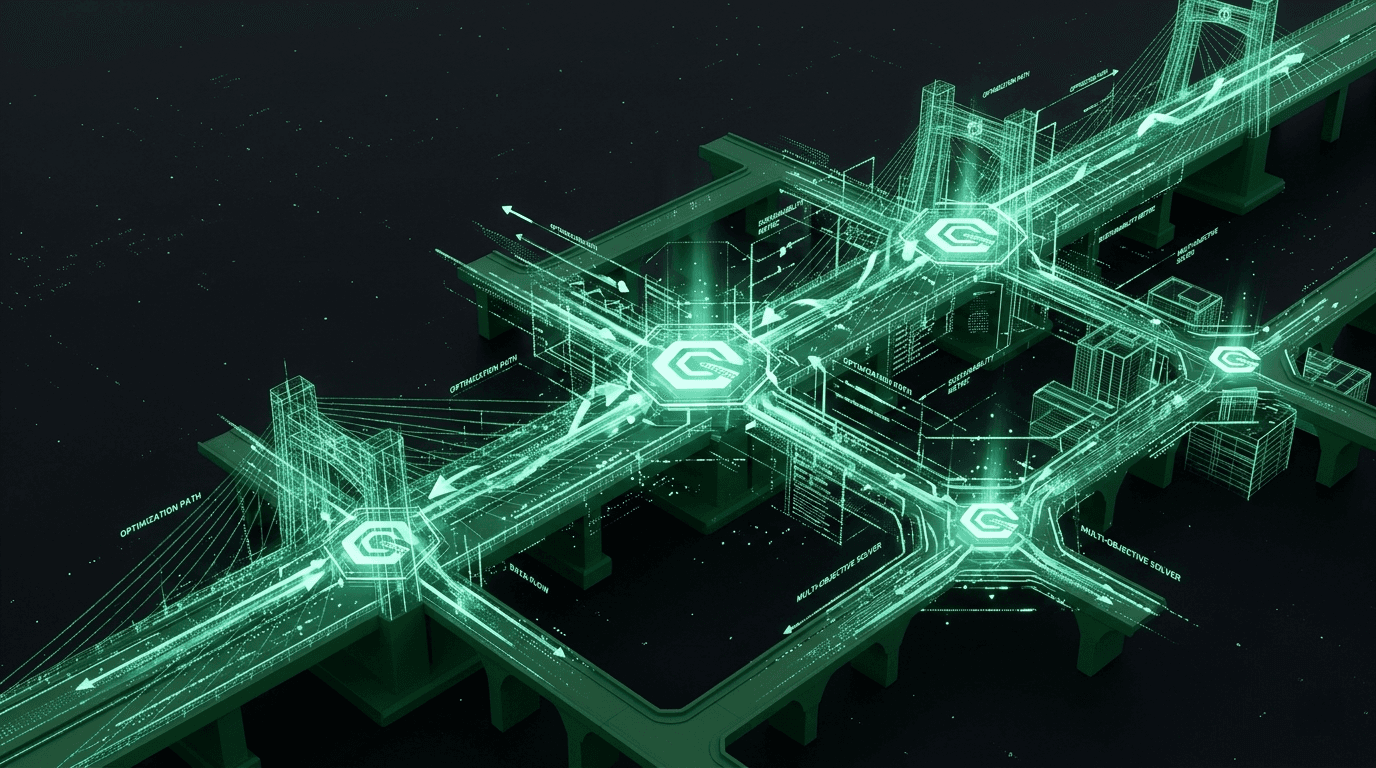

By porting structural mechanics and multi-objective optimization algorithms to a GPU Inference Core, we are shifting from sequential math to massively parallel tensor operations. We are taking the large matrix and vector operations at the core of Computational Fluid Dynamics (CFD) and FEA and solving them concurrently. The result is a paradigm shift in speed. Simulations that historically brought enterprise servers to their knees are now executed in minutes, or even milliseconds.
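Why does this kind of math parallelize so well? One way to see it is the Jacobi method, a classic iterative solver for linear systems. The sketch below is plain Python and is only an illustration: Jacobi is chosen here because it is easy to read, not because it is the solver any particular product uses, and the function names and the small test system are assumptions. The point to notice is that each unknown's update reads only the previous iterate, so every update in a sweep is independent of the others.

```python
def jacobi_step(A, b, x_old):
    """One Jacobi sweep: every entry of the result depends only on x_old."""
    n = len(b)

    def update(i):
        off_diagonal = sum(A[i][j] * x_old[j] for j in range(n) if j != i)
        return (b[i] - off_diagonal) / A[i][i]

    # The n calls to update() do not depend on one another, so they could
    # run in any order, or all at once with one GPU thread per unknown.
    return [update(i) for i in range(n)]


def jacobi_solve(A, b, tol=1e-10, max_iters=10_000):
    """Repeat Jacobi sweeps until the largest change falls below tol."""
    x = [0.0] * len(b)
    for _ in range(max_iters):
        x_new = jacobi_step(A, b, x)
        if max(abs(p - q) for p, q in zip(x_new, x)) < tol:
            return x_new
        x = x_new
    return x


# A small diagonally dominant system (illustrative), for which Jacobi converges
A = [[4.0, -1.0, 0.0],
     [-1.0, 4.0, -1.0],
     [0.0, -1.0, 4.0]]
b = [2.0, 4.0, 10.0]
x = jacobi_solve(A, b)
```

On a CPU the list comprehension in `jacobi_step` runs one entry after another. On a GPU the same sweep can be spread across thousands of threads, which is the shift from sequential to parallel math described above.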

The Cost of Slow Computation

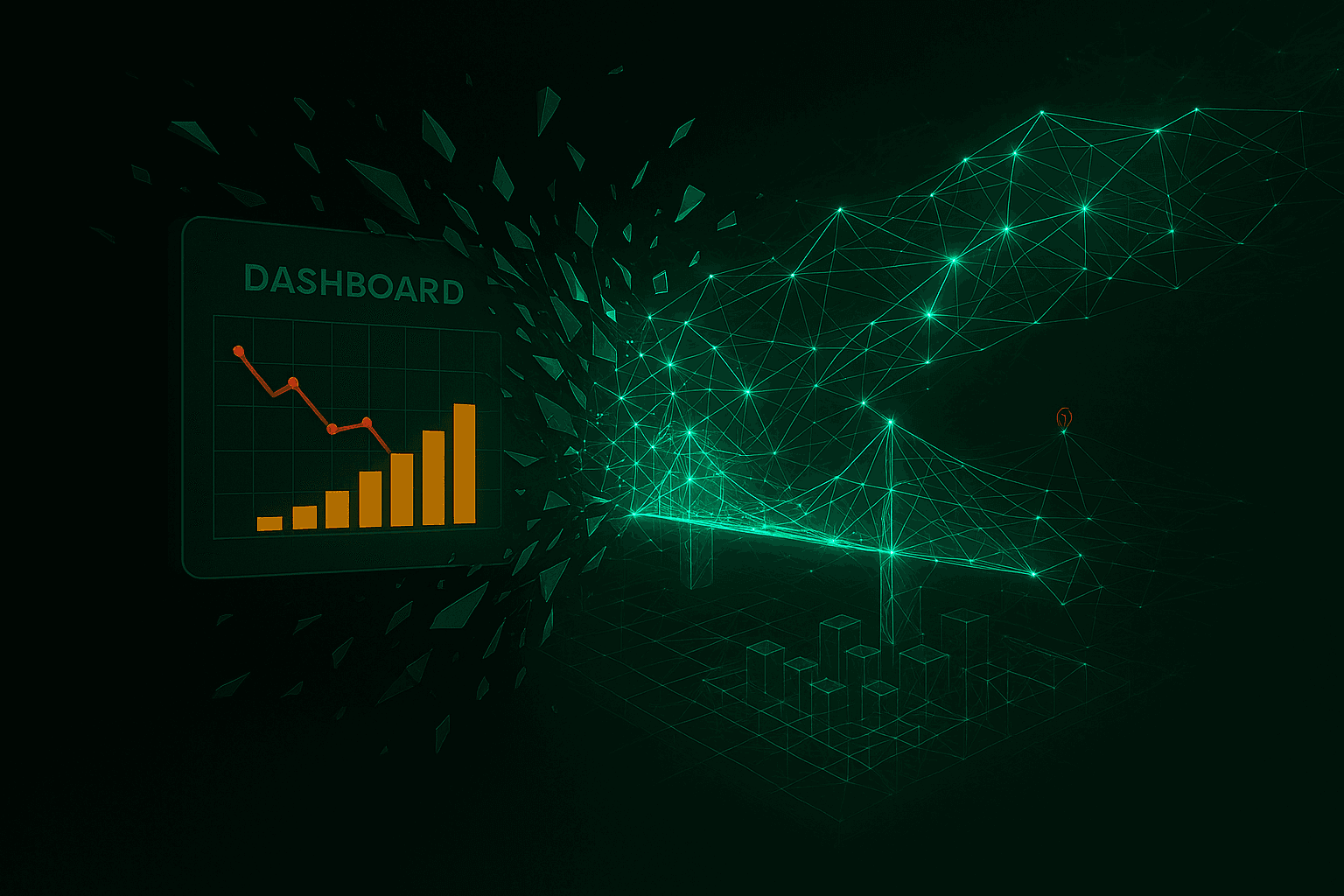

Why does this speed matter? Why is the debate between GPU and CPU critical to the climate crisis? Because in engineering, computational latency directly translates to physical waste and carbon emissions.

When a simulation takes a week to run, an engineering team cannot afford to iterate. They cannot test ten thousand variations of a structural design to find the absolute lowest-carbon material combination. They are forced to run a handful of models, find a design that safely meets the building code, and stop. Because they cannot exhaustively simulate the physical limits of the structure, they compensate for the unknown by over-engineering. They add thicker steel columns and pour deeper concrete foundations "just in case."
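The iteration budget is simple arithmetic, sketched below with hypothetical numbers: a 12-week design phase, a one-week run on one side, and a one-minute run on the other. The window length, the run times, and the function name are assumptions for illustration, not measured figures.

```python
MINUTES_PER_WEEK = 7 * 24 * 60  # 10,080 minutes


def runs_in_window(window_minutes, minutes_per_run):
    """Number of complete back-to-back simulation runs that fit in the window."""
    return window_minutes // minutes_per_run


# Hypothetical 12-week design phase
window = 12 * MINUTES_PER_WEEK

slow_runs = runs_in_window(window, MINUTES_PER_WEEK)  # one week per run
fast_runs = runs_in_window(window, 1)                 # one minute per run

# Time needed to test ten thousand variations at one week per run, in years
weeks_for_ten_thousand = 10_000
years_for_ten_thousand = weeks_for_ten_thousand / 52
```

Under these assumptions the slow workflow fits 12 design variants into the phase, while the fast one fits 120,960. Testing ten thousand variations at one week each would take roughly 192 years, which is why teams stop at a handful of models.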

This brute-force over-engineering is responsible for millions of tons of unnecessary embodied carbon entering our atmosphere every year. We are destroying the environment simply because our computers are too slow to find the optimal design.

Real-Time Resilience and Multi-Objective Optimization

By shattering the computational bottleneck with GPU architecture, GreenSphere is unlocking true Multi-Objective Optimization (MOO) for the built environment.

When inference latency drops from days to under a second, parametric design becomes a reality. An engineer can interact with a physics-based digital twin in real time. If they swap a traditional concrete aggregate for a new, sustainable composite, the GPU core instantly recalculates the entire structural integrity matrix, simultaneously updates the lifecycle carbon score, and cross-references the global supply chain for procurement viability.

We allow engineers to actively map the Pareto frontier: the set of designs where physical resilience cannot be increased any further without raising the carbon footprint, and the carbon footprint cannot be cut any further without sacrificing resilience.
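Mapping a Pareto frontier can be sketched in a few lines once each candidate design has been simulated and scored. The example below is a minimal illustration with two objectives, carbon (lower is better) and resilience (higher is better). The `Design` type, the scores, and the candidate names are made up for the example and do not come from any real project.

```python
from typing import NamedTuple


class Design(NamedTuple):
    name: str
    carbon: float      # embodied carbon, lower is better (arbitrary units)
    resilience: float  # resilience score, higher is better (arbitrary units)


def dominates(a, b):
    """True if a is no worse than b on both objectives and strictly better on one."""
    no_worse = a.carbon <= b.carbon and a.resilience >= b.resilience
    strictly_better = a.carbon < b.carbon or a.resilience > b.resilience
    return no_worse and strictly_better


def pareto_front(designs):
    """Keep only the designs that no other design dominates."""
    return [d for d in designs if not any(dominates(o, d) for o in designs)]


# Hypothetical candidates with made-up scores
candidates = [
    Design("A", carbon=100.0, resilience=0.90),
    Design("B", carbon=80.0, resilience=0.85),
    Design("C", carbon=120.0, resilience=0.88),  # dominated by A
    Design("D", carbon=60.0, resilience=0.70),
    Design("E", carbon=85.0, resilience=0.80),   # dominated by B
]
front = pareto_front(candidates)
front_names = [d.name for d in front]
```

Here designs A, B, and D survive: each one trades some resilience for lower carbon, and none is beaten on both counts. The filter itself is cheap. The expensive part is producing a trustworthy score for every candidate, which is where simulation speed decides how many candidates can be considered at all.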

The GreenSphere Vision

The challenges of the 21st century cannot be solved with the computational architecture of the 20th century. The math required to save our physical world is simply too heavy for legacy processors to carry. At GreenSphere Innovations, we recognize that software is only as capable as the silicon it runs on. By pioneering native GPU-accelerated computing for systems engineering and physical logistics, we are giving the builders of tomorrow the uncompromising computational power they need to engineer a resilient, sustainable planet. The math has changed, and it is time our infrastructure caught up.