Building a GPU Inference Core for the Built Environment

Every time the engineering community discusses the future of artificial intelligence, digital twins, or sustainable smart cities, the conversation naturally gravitates toward software. We enthusiastically debate algorithms, large language models, and predictive data pipelines. But software does not exist in a vacuum. It is not an abstract concept floating in the cloud; it is deeply bound by the physical constraints of the hardware it runs on. For the past forty years, the software designed to build our physical world—the CAD programs, the structural mechanics simulators, the logistics routing engines—has been built to run almost exclusively on Central Processing Units, or CPUs.

Today, as the aggressive demands of climate resilience and decarbonization fundamentally alter the mandate of civil engineering, that legacy hardware architecture has become the single greatest bottleneck in the built environment.

To understand why, we must look at the mathematical nature of physical reality. A CPU is an incredibly powerful, flexible processor, but it is built around a small number of cores, each optimized to execute one complex instruction stream as fast as possible. Even with multiple cores and vector units, it works through very large workloads largely in sequence. This architecture was perfectly sufficient for the static, two-dimensional drafting and localized calculations that defined civil engineering in the 20th century. However, the physical world does not operate sequentially. Reality happens all at once.

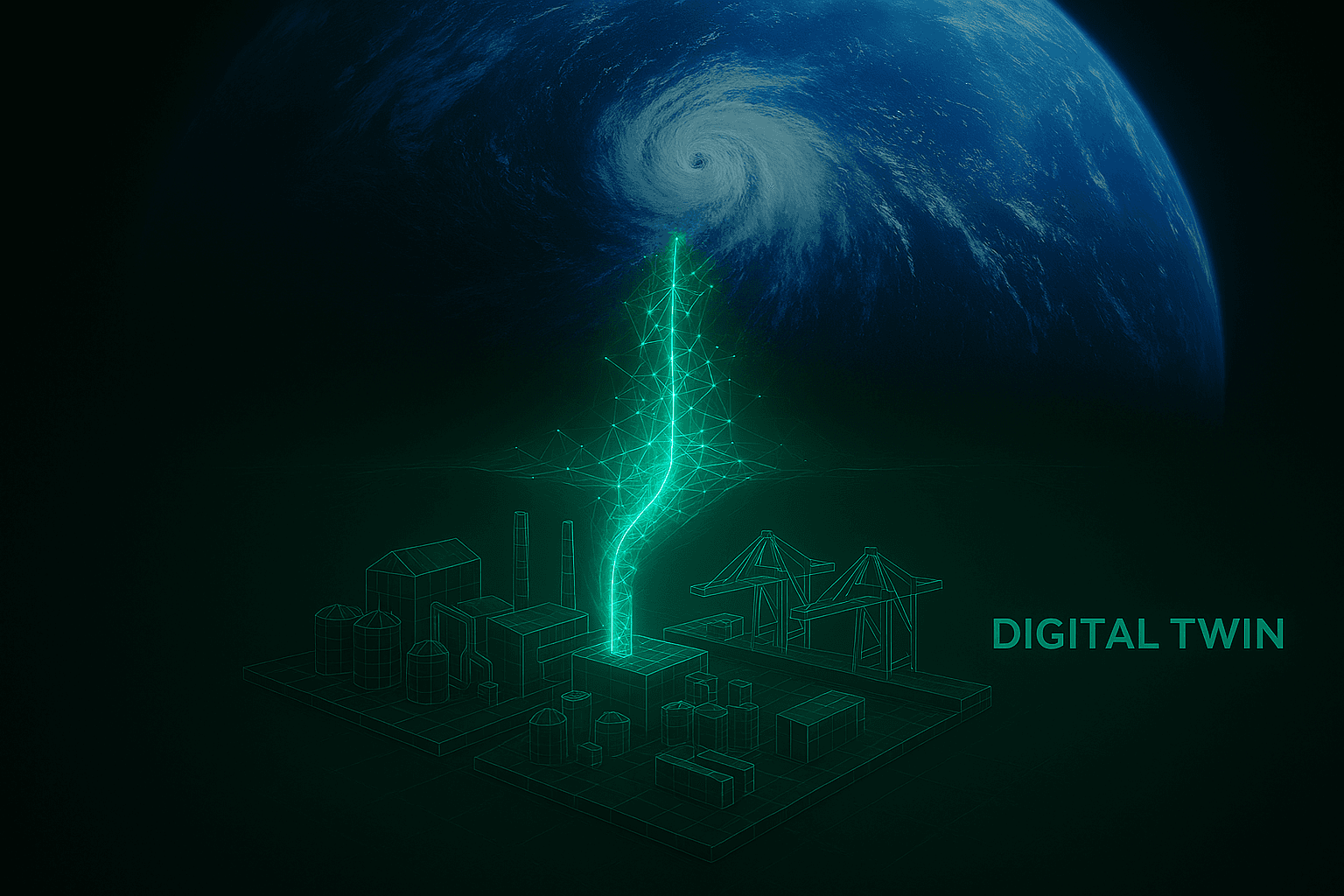

When a Category 5 hurricane strikes a coastal suspension bridge, the wind does not apply force to the first suspension cable, wait for the math to resolve, and then move on to the next one. The aerodynamic flutter, the thermal contraction, and the hydraulic shear act on millions of distinct structural nodes at the same moment. To accurately simulate how a structure will react to unprecedented climate extremes, you have to solve non-linear differential equations discretized into millions of coupled unknowns, and advance all of them together at every time step. When you force this massively parallel workload through the largely sequential processing pipeline of a legacy CPU-bound solver, the system inevitably chokes. A comprehensive lifecycle carbon analysis or a high-fidelity structural stress test can take days or even weeks to compute.

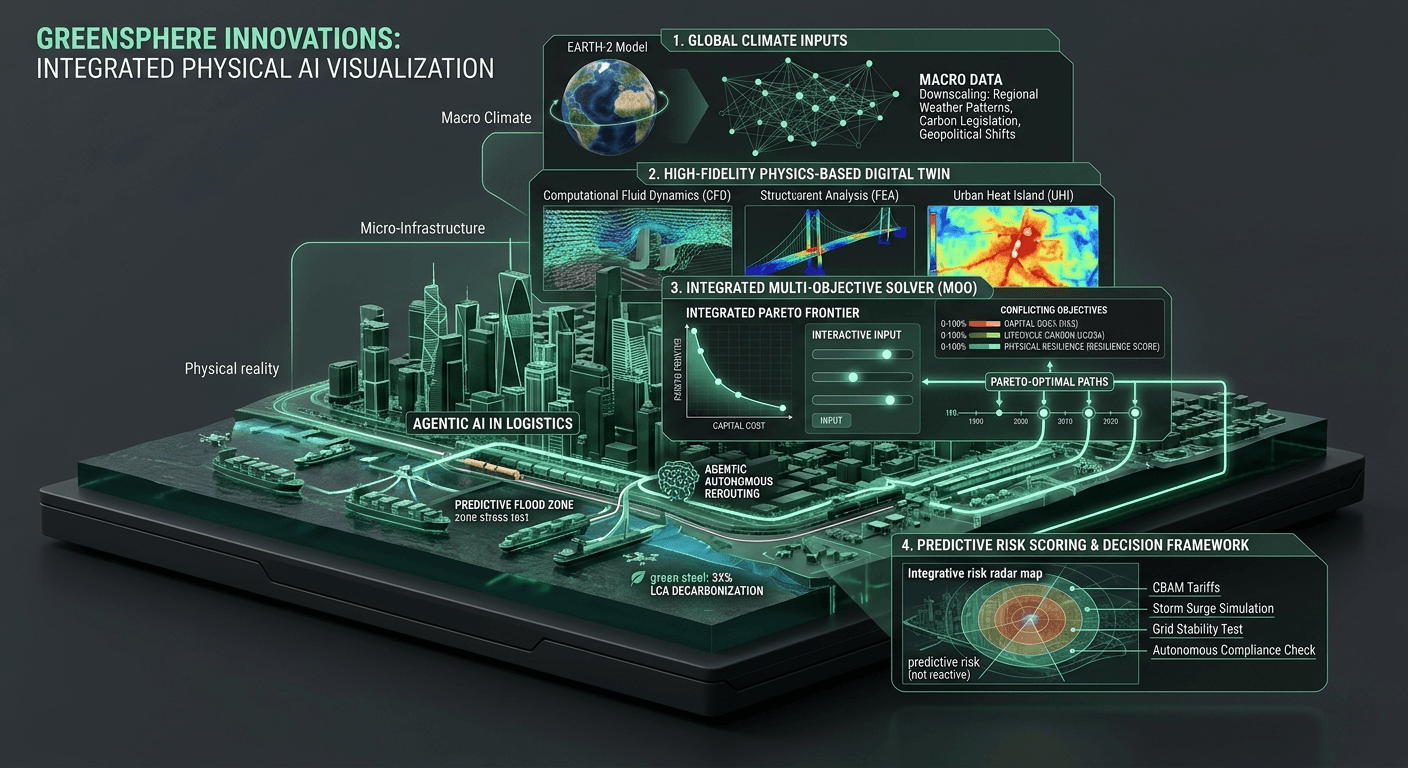

At GreenSphere Innovations, we realized that we could not build the sustainable infrastructure of the future using the computational architecture of the past. To shatter this bottleneck, we had to fundamentally re-architect how physical computation is handled at the enterprise level. We stopped relying on sequential logic and built a native GPU Inference Core specifically designed for the built environment.

Graphics Processing Units (GPUs) were originally engineered to render millions of independent pixels on a screen simultaneously. Since the late 2000s, high-performance computing has repurposed this architecture, recognizing that a chip able to calculate millions of pixels at once can also calculate millions of physical forces, material stresses, and logistical routing parameters at once, provided the work can be expressed as many independent operations. By leveraging this massive concurrency, we are able to execute highly optimized tensor operations that process structural mechanics and carbon matrices in parallel.

It is critical to note that building a GPU inference core for civil engineering is not as simple as taking legacy software and plugging it into a faster server. If the underlying code is written for serial execution, with each step waiting on the result of the previous one, a GPU will not save it. You cannot put a jet engine on a horse-drawn carriage and expect it to break the sound barrier. At GreenSphere, we have built our computational engine from the ground up, writing native code that explicitly targets parallel processing frameworks.
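To make the difference between serial and parallel-friendly code concrete, here is a minimal sketch in plain Python. It is not GreenSphere's solver; it uses two textbook relaxation methods (Jacobi and Gauss-Seidel) on a hypothetical one-dimensional chain of nodes with fixed end values. The function names and the toy problem are assumptions made for illustration, and the code runs on a CPU: the point is only the dependency structure. In the Jacobi sweep every node reads the previous iterate, so all updates are independent and could each be handed to a separate GPU thread. In the Gauss-Seidel sweep each node reads a value written earlier in the same loop, so the updates must happen in order.

```python
def jacobi_sweep(u):
    """One relaxation sweep where every interior node reads only the OLD values.

    Each list element is computed independently of the others, which is the
    property that lets this kind of update map onto thousands of GPU threads.
    """
    interior = [0.5 * (u[i - 1] + u[i + 1]) for i in range(1, len(u) - 1)]
    return [u[0]] + interior + [u[-1]]


def gauss_seidel_sweep(u):
    """One relaxation sweep with a loop-carried dependency.

    u[i - 1] has already been overwritten earlier in this same sweep, so
    iteration i cannot start until iteration i - 1 has finished.
    """
    u = list(u)  # work on a copy so the caller's list is untouched
    for i in range(1, len(u) - 1):
        u[i] = 0.5 * (u[i - 1] + u[i + 1])
    return u


def solve(sweep, u, tol=1e-9, max_sweeps=100_000):
    """Repeat a sweep until the largest per-node change falls below tol."""
    for n in range(1, max_sweeps + 1):
        new = sweep(u)
        if max(abs(a - b) for a, b in zip(new, u)) < tol:
            return new, n
        u = new
    return u, max_sweeps


if __name__ == "__main__":
    # Hypothetical chain of 11 nodes: left end held at 0.0, right end at 1.0.
    start = [0.0] * 10 + [1.0]
    _, jacobi_sweeps = solve(jacobi_sweep, start)
    _, gs_sweeps = solve(gauss_seidel_sweep, start)
    print("Jacobi sweeps:", jacobi_sweeps)
    print("Gauss-Seidel sweeps:", gs_sweeps)
```

On a toy problem like this, the serial method typically needs fewer sweeps, which is one reason legacy solvers favored it. The parallel-friendly formulation does more total work but exposes every node update as independent, and that trade is what a from-scratch GPU design has to make deliberately.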

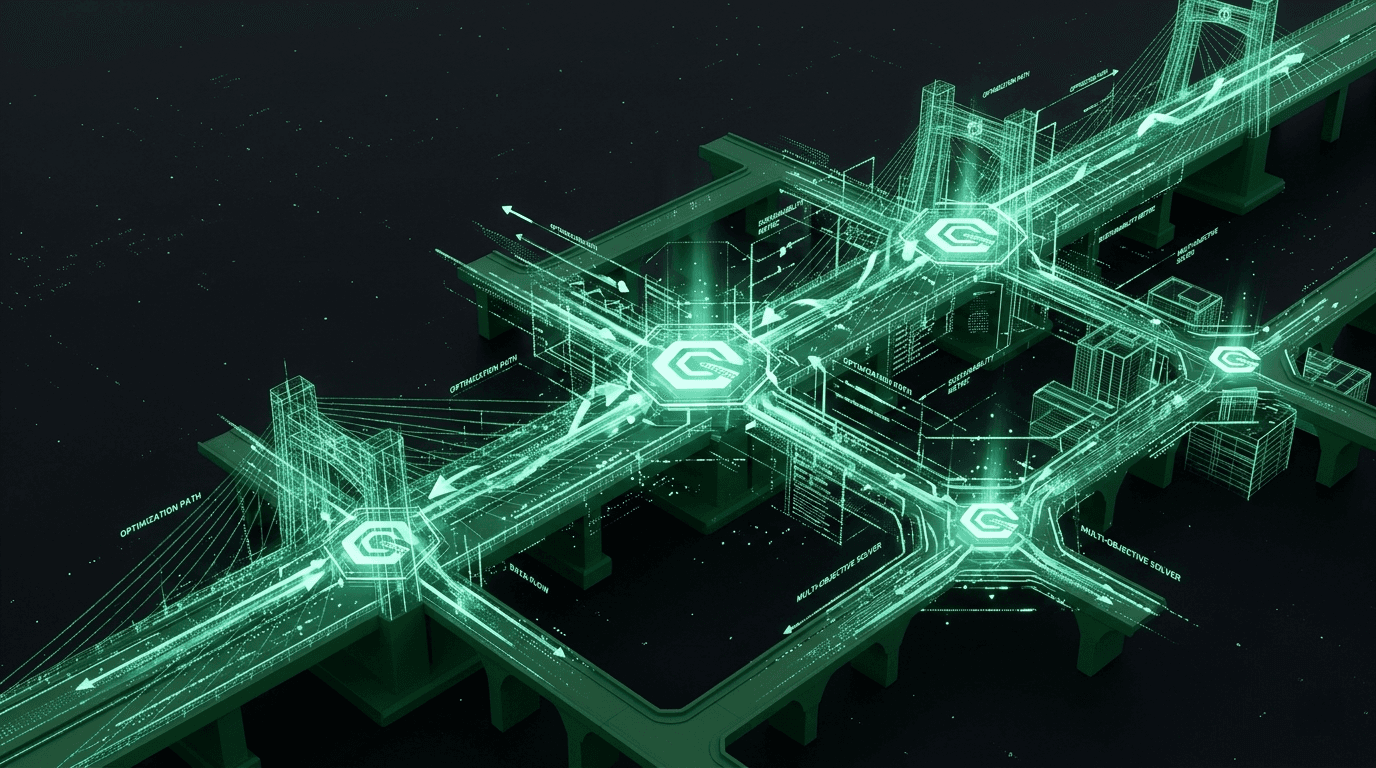

The result of this foundational shift is nothing short of revolutionary for the systems engineer. We are transitioning the entire industry from a paradigm of "batch processing" to a paradigm of "interactive engineering." When an architect or structural engineer makes a design change (perhaps swapping traditional steel girders for a new, low-carbon composite material), they no longer have to submit the model to a server farm and wait overnight for the environmental impact and structural safety reports. Our GPU inference core re-solves the entire multi-objective optimization problem interactively. The lifecycle carbon score and the physical resilience threshold both update in under a second.

This speed is what makes true sustainability possible. When you drop the latency of complex simulation from days to a fraction of a second, you allow engineers to explore millions of permutations. You give them the computational freedom to map the Pareto-optimal set of designs, those where capital cost, human safety, and embodied carbon cannot be improved on one axis without giving ground on another, and to choose the best balance from it.
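For readers who want to see what Pareto-optimal means in practice, the following is a minimal sketch of a brute-force Pareto filter in plain Python. The candidate designs, their names, and all numbers are invented for illustration and are not GreenSphere data; the three objectives are treated as quantities to minimize, with "risk" standing in as a hypothetical proxy for safety. A design is kept only if no other candidate is at least as good on every objective and strictly better on at least one.

```python
from typing import NamedTuple


class Design(NamedTuple):
    name: str
    cost: float    # capital cost, arbitrary units (lower is better)
    risk: float    # hypothetical safety-risk proxy (lower is better)
    carbon: float  # embodied carbon, arbitrary units (lower is better)


def dominates(a, b):
    """True if a is no worse than b on every objective and better on one."""
    obj_a, obj_b = a[1:], b[1:]  # skip the name field
    no_worse = all(x <= y for x, y in zip(obj_a, obj_b))
    strictly_better = any(x < y for x, y in zip(obj_a, obj_b))
    return no_worse and strictly_better


def pareto_front(designs):
    """Keep every design that no other candidate dominates (O(n^2) scan)."""
    return [d for d in designs if not any(dominates(o, d) for o in designs)]


# Invented candidates, for illustration only.
CANDIDATES = [
    Design("steel_girder", cost=100.0, risk=0.02, carbon=900.0),
    Design("low_carbon_composite", cost=130.0, risk=0.02, carbon=500.0),
    Design("timber_hybrid", cost=120.0, risk=0.03, carbon=400.0),
    Design("overbuilt_steel", cost=150.0, risk=0.02, carbon=950.0),
]

if __name__ == "__main__":
    for design in pareto_front(CANDIDATES):
        print(design.name)
```

In this toy set, the overbuilt steel option drops out because the plain steel girder matches or beats it on all three objectives, while the remaining three survive because each wins on at least one axis. The pairwise comparisons are independent of one another, which is why this kind of filter scales well when the candidate evaluations themselves come from a parallel simulation.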

The GreenSphere Vision

We are moving into an era where the margin for error in civil engineering is vanishingly small. The climate is shifting, global supply chains are increasingly fragile, and the carbon budget of our planet is nearly exhausted. To navigate these compounding crises, we must equip our best engineers with uncompromising computational power. By building a dedicated GPU inference core for the built environment, GreenSphere Innovations is ensuring that the physical limitations of legacy hardware will never again stand in the way of a resilient, sustainable future.