Bridging Civil Engineering and Data Science

For decades, an invisible but rigid wall has separated the physical from the digital. On one side stood civil engineering, a discipline defined by the tangible: statics, material science, structural mechanics, and concrete. On the other side stood advanced computer science, a realm of abstract algorithms, massive datasets, and cloud architecture. Historically, these two fields operated largely in isolation. The structural engineer did not need to understand database architecture, and the data scientist did not need to understand the shear strength of a structural steel beam.

Today, that isolation is not just inefficient; it is slowing our response to the climate crisis. We cannot build the resilient, low-carbon infrastructure of the future using only the tools of the past. The defining challenge of our generation requires a genuine fusion of these two disciplines.

The Limitations of the Physical Sandbox

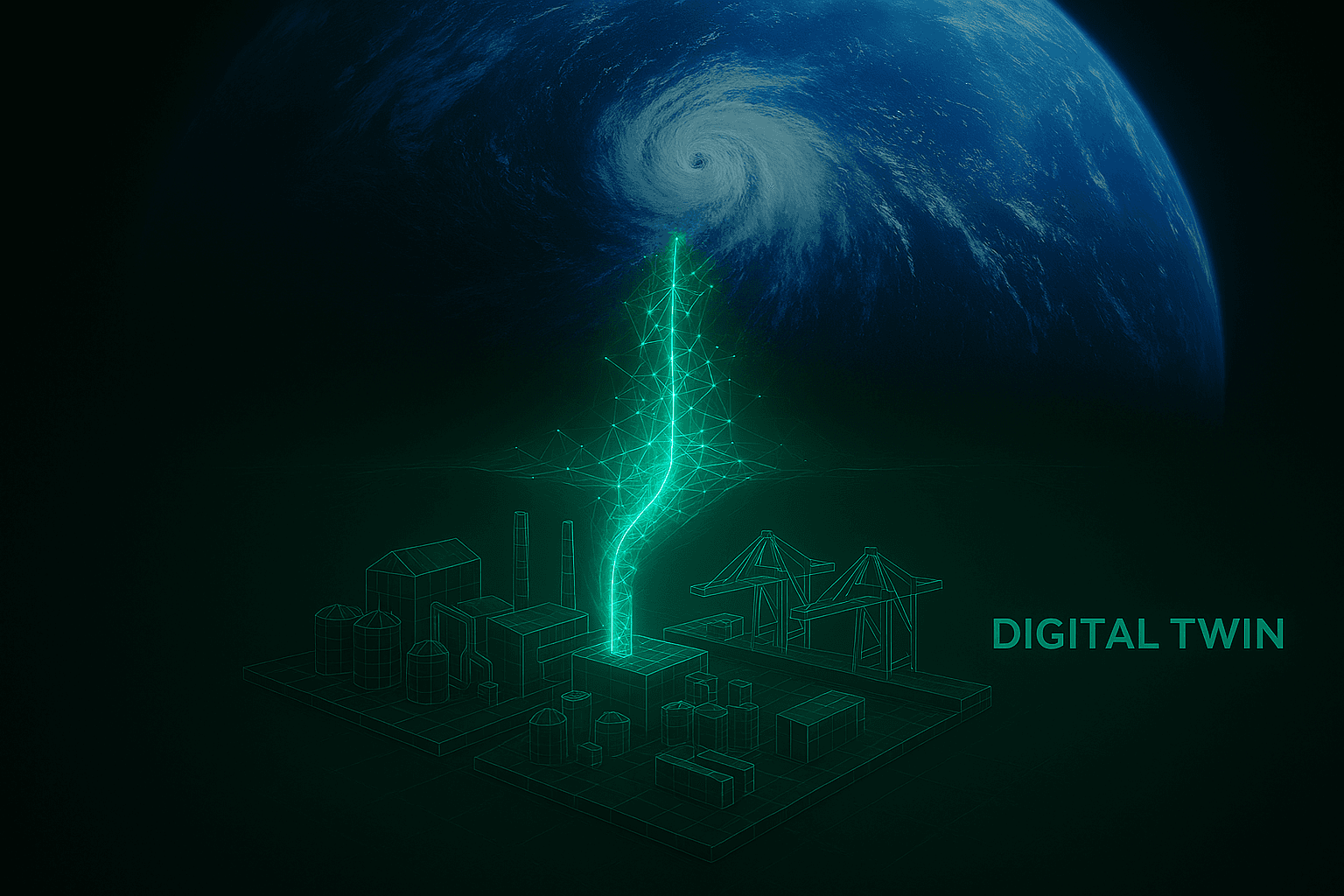

Civil engineering is bound by the uncompromising laws of physics. When designing a suspension bridge or a transit hub, failure is not a software bug that can be patched in the next update; it is a catastrophic physical event. Because of this reality, the industry is inherently conservative, relying heavily on established building codes and historical precedent. However, as extreme weather events become more frequent and the mandate for rapid decarbonization grows, historical precedent is becoming a less reliable guide. We need to iterate on and optimize our physical environment faster than physical prototyping allows.

This is exactly where the traditional civil engineering toolkit hits a hard ceiling. You cannot physically build and test ten thousand versions of a city grid or a global supply chain to see which variation has the lowest lifecycle carbon impact. To achieve that level of multi-objective optimization, we have to move the physical world into a digital sandbox. We have to transition from merely managing physical materials to managing complex technological systems. This requires a fundamental shift in how we approach infrastructure, treating a city or a logistics network not as a collection of static concrete objects, but as a living, dynamic, data-generating network.
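As a minimal sketch of what a "digital sandbox" comparison might look like, the Python below scores ten thousand design variants on two objectives (lifecycle carbon and cost) and keeps only the Pareto-optimal ones, meaning the variants that no other variant beats on both. The `simulate_variant` function and all of its numbers are hypothetical placeholders standing in for a real engineering simulation; they are not data from any actual project.

```python
import random


def pareto_front(designs):
    """Return the designs that no other design beats on both carbon and cost.

    Lower is better for both objectives. Exact duplicates of a front
    member are dropped.
    """
    # Sort by carbon (ties broken by cost), then sweep once: a design is
    # kept only if it is cheaper than every lower-carbon design seen so far.
    ordered = sorted(designs, key=lambda d: (d["carbon"], d["cost"]))
    front = []
    best_cost = float("inf")
    for d in ordered:
        if d["cost"] < best_cost:
            front.append(d)
            best_cost = d["cost"]
    return front


def simulate_variant(variant_id, rng):
    """Hypothetical stand-in for a real simulation; numbers are made up."""
    recycled_fraction = rng.random()
    carbon = 1000.0 * (1.0 - 0.6 * recycled_fraction) + rng.uniform(-50, 50)
    cost = 500.0 * (1.0 + 0.8 * recycled_fraction) + rng.uniform(-50, 50)
    return {"id": variant_id, "carbon": carbon, "cost": cost}


if __name__ == "__main__":
    rng = random.Random(42)  # fixed seed so the run is repeatable
    variants = [simulate_variant(i, rng) for i in range(10_000)]
    front = pareto_front(variants)
    print(f"{len(front)} of {len(variants)} variants are Pareto-optimal")
```

The point of the sketch is the shape of the workflow rather than the model itself: generate variants digitally, score each one on several objectives, and hand decision-makers the short list of non-dominated trade-offs instead of a single "best" answer.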

The New Analytical Toolkit for Infrastructure

Bridging this gap requires a new breed of systems engineer—professionals who can speak the language of structural mechanics while wielding the analytical power of modern data science. The tools required to design sustainable infrastructure no longer stop at CAD software or basic load calculators.

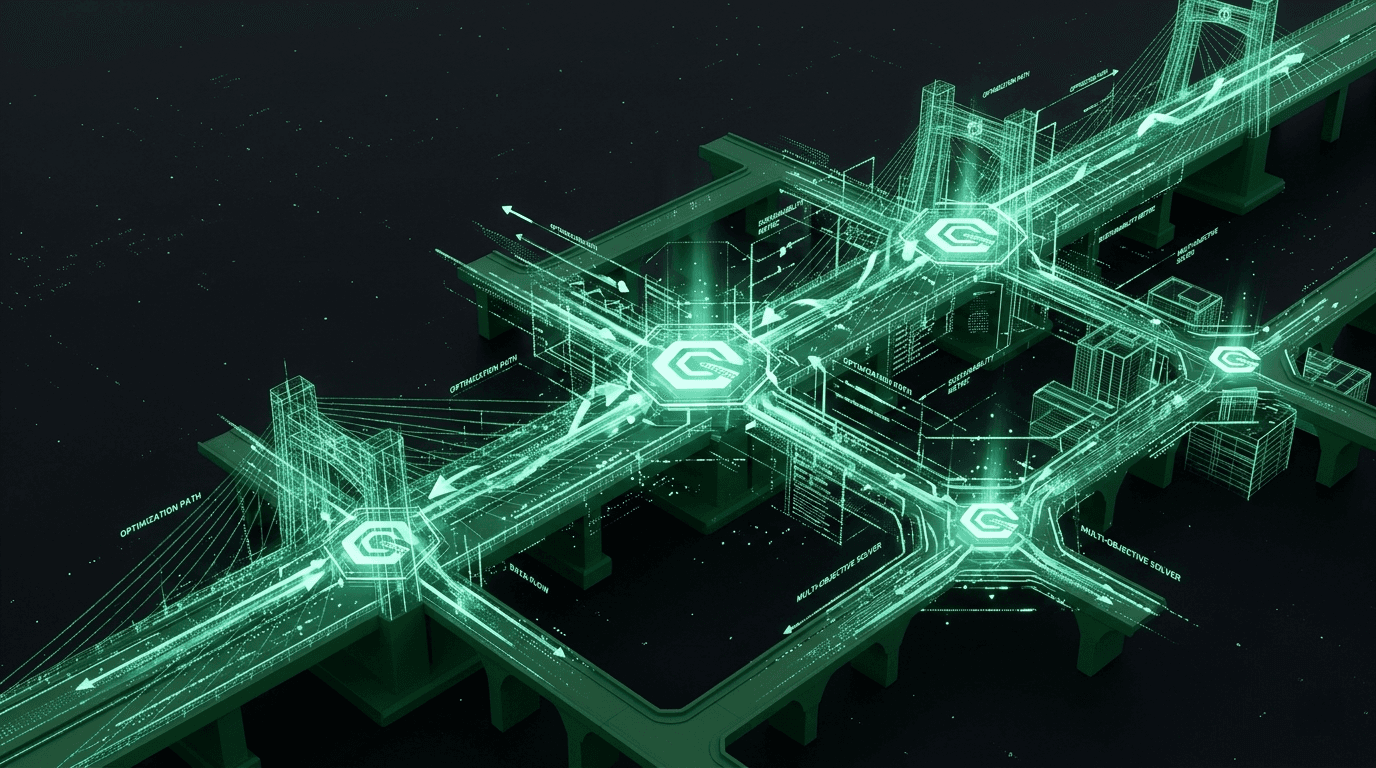

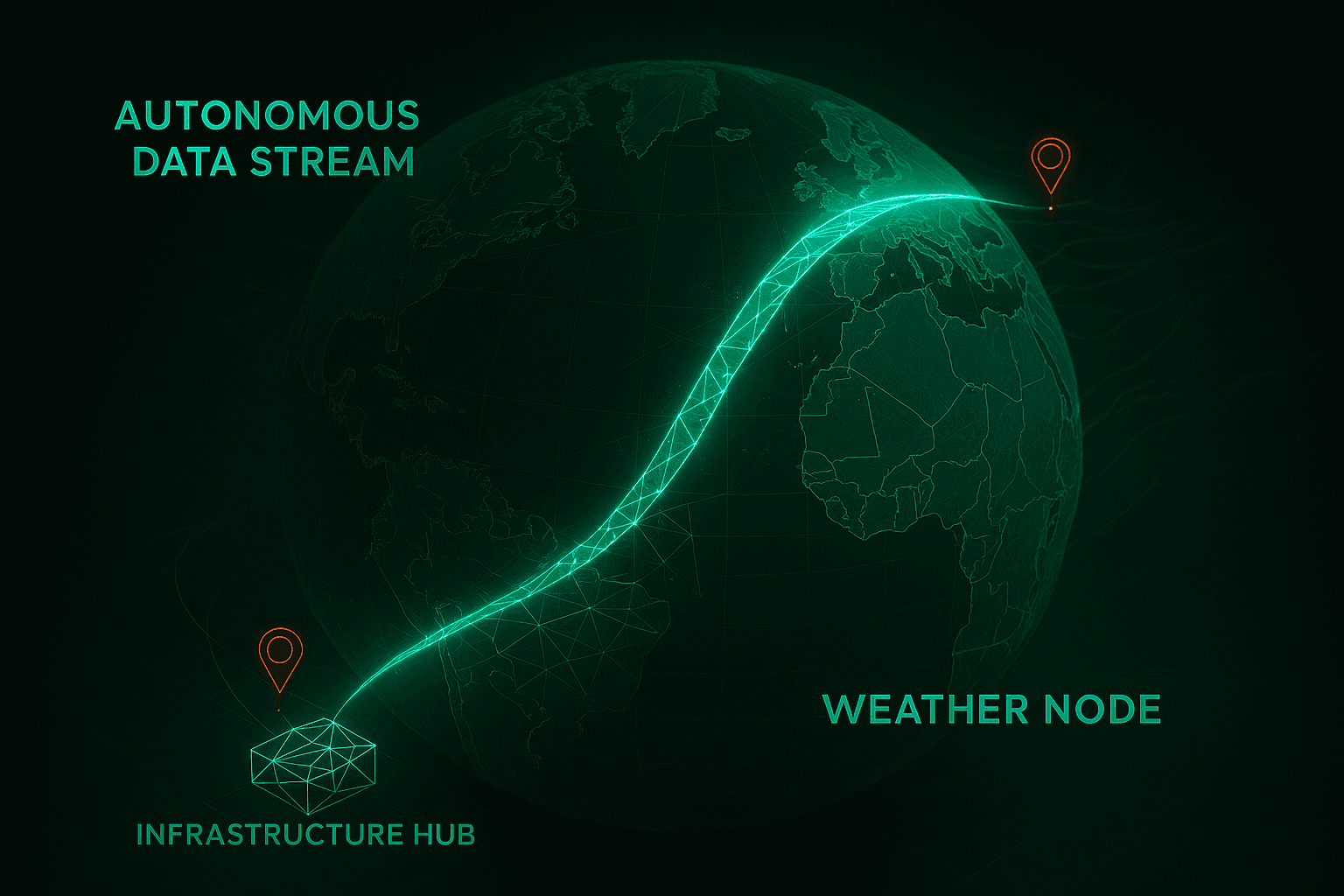

To truly understand and optimize the built environment, we must ingest and analyze large streams of operational data. This means integrating robust technical toolkits directly into the engineering workflow. It requires using languages like Python and R to write the scripts that process large environmental datasets, and using SQL to query the relational databases that track global supply chain resilience. It means using visualization platforms like Power BI and Tableau to translate millions of rows of structural stress data into actionable, executive-level insights.
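To make the Python-plus-SQL workflow concrete, here is a small sketch using Python's built-in `sqlite3` module. It loads stress readings into an in-memory table and uses a SQL aggregate to find the members whose peak stress exceeds a limit. The table name `readings`, the column names, the member IDs, and the limit value are all illustrative assumptions, not a real monitoring schema.

```python
import sqlite3


def members_over_limit(rows, limit_mpa):
    """Return (member_id, peak_stress) pairs whose peak exceeds limit_mpa.

    rows is an iterable of (member_id, stress_mpa) tuples. The schema
    here is hypothetical and kept deliberately minimal.
    """
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute(
            "CREATE TABLE readings (member_id TEXT, stress_mpa REAL)"
        )
        conn.executemany("INSERT INTO readings VALUES (?, ?)", rows)
        # Aggregate in SQL: one row per member, highest peak first.
        cursor = conn.execute(
            "SELECT member_id, MAX(stress_mpa) AS peak "
            "FROM readings "
            "GROUP BY member_id "
            "HAVING MAX(stress_mpa) > ? "
            "ORDER BY peak DESC",
            (limit_mpa,),
        )
        return cursor.fetchall()
    finally:
        conn.close()


if __name__ == "__main__":
    # Made-up sample readings for illustration only.
    sample = [
        ("beam-1", 120.0),
        ("beam-1", 180.0),
        ("beam-2", 90.0),
        ("col-1", 210.0),
    ]
    for member_id, peak in members_over_limit(sample, limit_mpa=150.0):
        print(f"{member_id}: peak {peak} MPa exceeds limit")
```

In practice the same query would run against a persistent database fed by sensors, and its output is the kind of condensed table a dashboard tool would then visualize.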

This is not just IT work; it is the new foundation of structural analysis. By applying data-driven optimization to complex physical systems, we can identify carbon bottlenecks and structural vulnerabilities long before the first foundation is poured.

Systems Architecture Over Algorithm Design

As we integrate these fields, there is a common misconception that every civil engineer must become a deep-learning researcher. This is fundamentally untrue. The goal is not to force infrastructure experts to reinvent machine learning algorithms from scratch. Rather, the future belongs to the systems architect—the professional who understands how to apply existing, powerful data analytics to heavy physical realities.

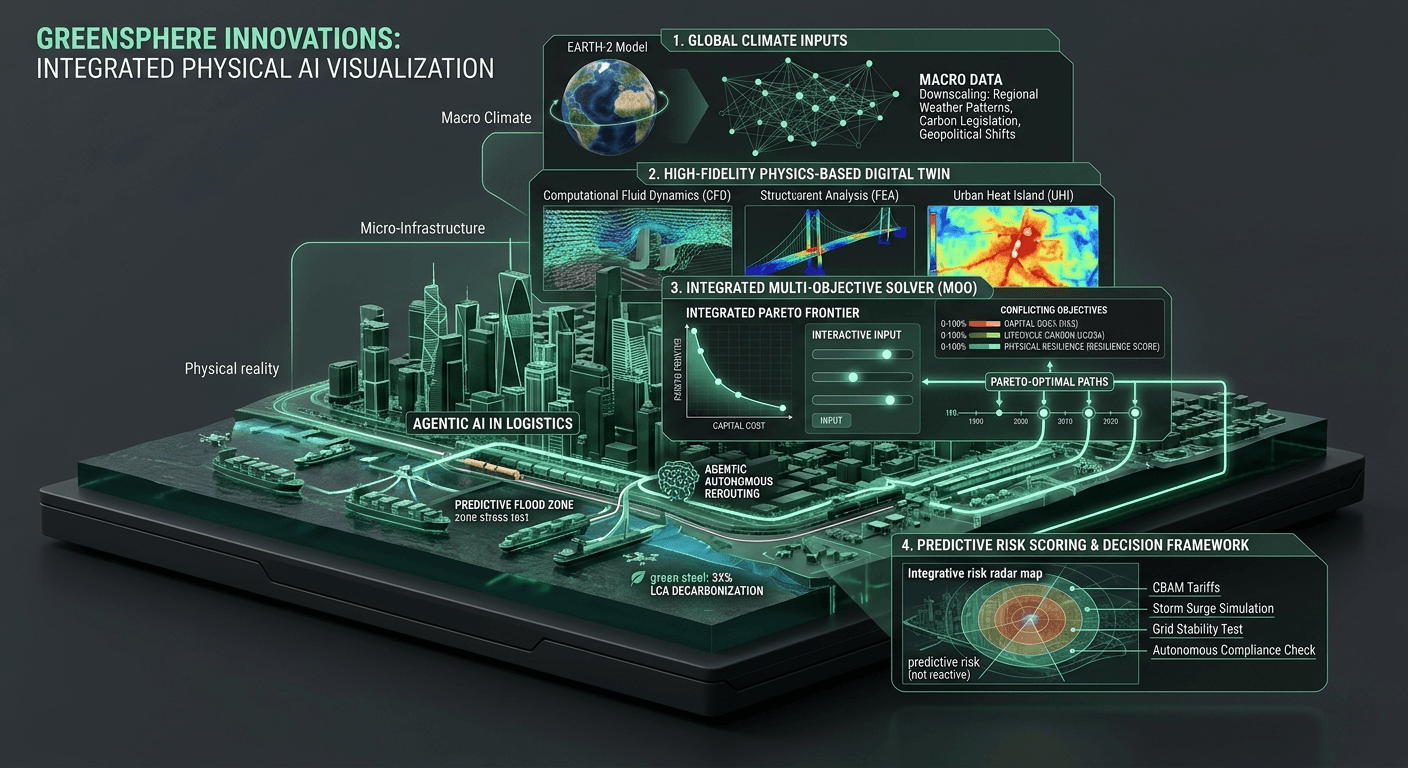

It is about knowing how to structure a multi-variable optimization problem, with explicit objectives, decision variables, and physical constraints, so that an existing solver can handle it. It involves orchestrating the data pipelines that feed real-time climate models into structural digital twins, and tracking the provenance of sustainable materials across a fragmented global logistics network. The true innovation lies in the application and management of technology. By focusing on rigorous systems engineering, we ensure that the data science we deploy respects the unyielding physical laws of civil engineering, rather than just chasing statistical correlations that fall apart in the real world.
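One way to picture "respecting physical laws" is to treat the physics as a hard constraint that every candidate must pass before any optimization happens. The sketch below does this for a deliberately simple case: a simply supported rectangular beam under uniform load, where the maximum bending moment is wL²/8 and the section modulus is bh²/6. It searches candidate depths and returns the lowest-carbon one that stays under an allowable stress. The load, span, allowable stress, and carbon factor are placeholder values chosen for illustration, and a real design would check far more than bending.

```python
def bending_stress_pa(load_n_per_m, span_m, width_m, depth_m):
    """Peak bending stress for a simply supported rectangular beam."""
    moment = load_n_per_m * span_m ** 2 / 8.0      # M = w L^2 / 8
    section_modulus = width_m * depth_m ** 2 / 6.0  # S = b h^2 / 6
    return moment / section_modulus


def lowest_carbon_depth_mm(load_n_per_m, span_m, width_m, allowable_pa,
                           carbon_kg_per_m3, candidate_depths_mm):
    """Return (depth_mm, carbon_kg) for the feasible depth with least carbon.

    Returns None if no candidate satisfies the stress constraint.
    carbon_kg_per_m3 is a hypothetical embodied-carbon factor.
    """
    best = None
    for depth_mm in candidate_depths_mm:
        depth_m = depth_mm / 1000.0
        stress = bending_stress_pa(load_n_per_m, span_m, width_m, depth_m)
        if stress > allowable_pa:
            continue  # physics first: infeasible designs are never scored
        carbon = width_m * depth_m * span_m * carbon_kg_per_m3
        if best is None or carbon < best[1]:
            best = (depth_mm, carbon)
    return best


if __name__ == "__main__":
    # All inputs below are illustrative placeholders.
    result = lowest_carbon_depth_mm(
        load_n_per_m=10_000.0,
        span_m=6.0,
        width_m=0.2,
        allowable_pa=10e6,
        carbon_kg_per_m3=300.0,
        candidate_depths_mm=range(200, 601, 50),
    )
    print(result)
```

The design choice worth noting is the ordering: the constraint check runs before the objective is evaluated, so a statistically attractive but physically infeasible option can never be selected.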

The GreenSphere Vision

The greatest advancements of the next twenty years will not come from isolating software in the cloud or confining engineering to the dirt. They will come from the exact intersection of these two domains.

At GreenSphere Innovations, our entire DNA is built on this intersection. We are tearing down the silos that have historically separated the hard hat from the hard drive. By equipping infrastructure developers and logistics teams with enterprise-grade data architecture, we are empowering them to design systems that are mathematically optimized for both physical resilience and aggressive decarbonization. The civil engineers of the future must be data scientists of the physical world, and we are building the exact computational platform they need to engineer a sustainable planet.